Why Do We Need Edge Computing?

- Last Updated: December 2, 2024

Eric Simone

The past 15 to 20 years have brought a massive shift from on-premise software to cloud computing. We can now access everything we need from anywhere, without the limitations of fixed-location servers. However, the pendulum is about to swing back toward decentralized computing. So why do we need edge computing?

Given the massive opportunities generated by cloud networks, that idea might seem counterintuitive. But for us to move to the next generation and take advantage of all that the Internet of Things (IoT) has to offer, technology has to become local again.

Take a look at agricultural history to draw some parallels. A century or more ago, people consumed foods that were cultivated in their local area. If it didn’t grow or breed within 50-100 miles of where you lived, you probably wouldn’t have the opportunity to eat it.

Then, technology came along and opened new doors. Transportation got a lot faster, refrigeration meant food could travel without spoiling, and new farming techniques allowed for mass production. With these developments, consumers gained access to foods from all over the world.

We’re still taking advantage of easy access to global foods, but there’s been a shift back to local sources for a number of reasons. Shipping foods over long distances impacts the environment. Consumers want to contribute to their local economy. And many of us want fewer artificial ingredients in the foods we consume.

So what does that mean for cloud computing? Just like global food access, cloud computing isn’t going away completely. But much of the everyday processing will move from the cloud to what’s now called “the edge.”

What is Edge Computing?

When we first set out to understand the cloud, it made sense to compare it to on-premise computing. On-premise computing meant data stored and managed centrally on a corporate mainframe or server. Cloud computing means data storage and processing on a network of remote servers, off “in the clouds,” so to speak.

So if cloud computing happens on remote servers, edge computing happens close to the events it records. Edge computing encompasses sensors that collect data (such as RFID tags), an on-site data center, and the network that connects them so that computing can happen locally. Data processing happens at the source, far from the cloud: at the “edge.” Edge computing networks can still connect to the cloud when necessary, but they don’t *need* the cloud to function.

Even now, consumers already use a variety of IoT devices that could be considered edge devices. You might have a Nest thermostat that controls the climate in your home, a Fitbit that tracks personal fitness, or even Alexa or Google Home as a personal assistant. But these devices don’t deal with critical, time-sensitive events. You can afford to wait while the cloud processes your request to Alexa.

Edge computing wins out over cloud processing when time-sensitive events are happening. For self-driving cars to become a reality, those cars need to react to external factors in real time. If a self-driving car is traveling down a road and a pedestrian walks out in front of it, the car must stop immediately. It doesn’t have time to send a signal to the cloud and then wait for a response; it must be able to process the signal NOW.
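
To put “NOW” in numbers, here’s a quick back-of-the-envelope sketch that computes how far a car travels while it waits for a decision. The latencies are hypothetical round numbers chosen for illustration (100 ms for a round trip to a cloud server, 10 ms for an on-board decision), not measurements, and the calculation ignores braking distance entirely.

```python
def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres a vehicle covers while it waits latency_ms for a decision."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000  # ms -> s


# Hypothetical latencies for illustration only, not measured values.
SCENARIOS = {
    "cloud round trip": 100.0,
    "local edge decision": 10.0,
}

for label, latency_ms in SCENARIOS.items():
    metres = distance_travelled_m(72, latency_ms)
    print(f"{label}: {metres:.2f} m travelled at 72 km/h before reacting")
```

At 72 km/h (20 m/s), the assumed cloud round trip costs about 2 m of travel before the car even starts to brake, versus about 0.2 m for the local decision. Real network latency also varies from moment to moment, which makes the cloud path even harder to rely on.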

What are the Benefits of Edge Computing?

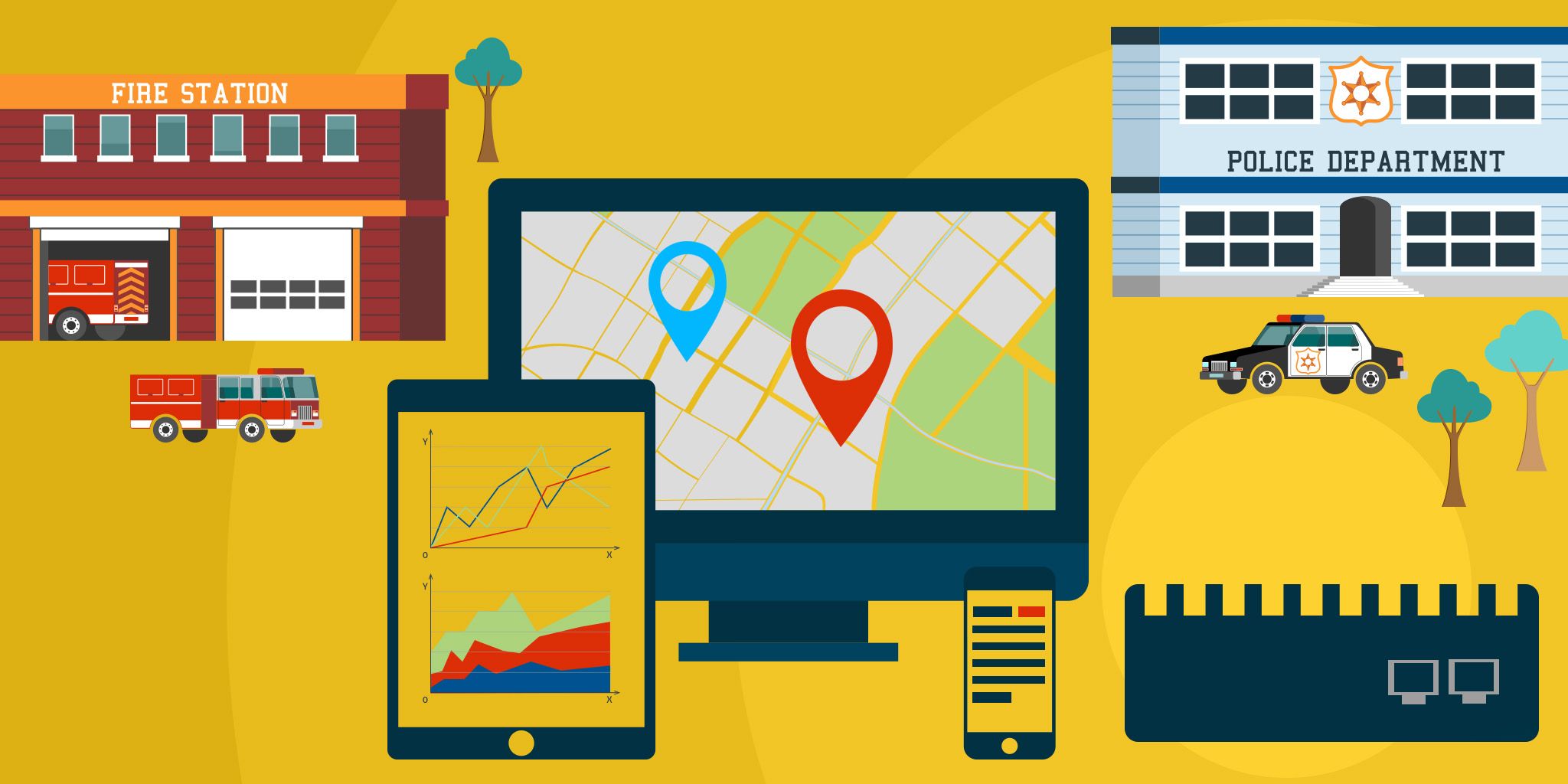

Clearly, speed is a huge factor in using edge computing, and there are plenty of applications that depend on it. Factories can use edge computing to drastically reduce the incidence of on-the-job injuries by detecting human flesh. TSA checkpoints can collect data on chemicals passing through different gates that, combined, could be used to make a bomb. Cities can use edge computing to address maintenance of roads and intersections before problems occur.

Another big benefit is process optimization. If self-driving cars, factories, and TSA checkpoints were to use the cloud instead of the edge, they’d be pushing all the data they gather up to the cloud. But if the edge makes decisions locally, the cloud may not need all that data immediately, or even at all.
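
One common way for the edge to avoid shipping everything upstream is to send summaries instead of raw readings. The article doesn’t prescribe a technique, so treat this as a sketch of one option: a window of raw sensor values is collapsed into a handful of statistics before anything leaves the site. The function name and sample values are hypothetical.

```python
from statistics import mean


def summarize_window(readings: list[float]) -> dict[str, float]:
    """Collapse a window of raw readings into a few summary fields for upload."""
    if not readings:
        raise ValueError("cannot summarize an empty window")
    return {
        "count": float(len(readings)),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
    }


# Hypothetical temperature samples gathered locally at the edge.
raw = [21.0, 21.5, 22.0, 21.5, 21.0, 23.0]
summary = summarize_window(raw)
print(f"{len(raw)} raw values -> {len(summary)} summary fields: {summary}")
```

With six samples the saving is trivial, but the same four fields could stand in for thousands of readings, while the raw data stays on site in case the cloud ever asks for it.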

With edge computing, local data centers can execute time-sensitive rules (like “stop the car”) immediately, then stream data to the cloud in batches when bandwidth needs aren’t as high. The cloud can then take the time to analyze data from the edge and send back recommended rule changes, like “decelerate slowly when the car senses human activity within 50 feet.”
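
The loop described above (act locally, upload in batches, accept revised rules from the cloud) can be sketched in a few lines. This is a hypothetical illustration, not any particular vendor’s rules engine: `EdgeRuleEngine`, the `pedestrian_distance_ft` field, the 10-foot stop threshold, and the fixed batch size (a crude stand-in for “when bandwidth needs aren’t as high”) are all assumptions. Only the 50-foot deceleration rule comes from the example above.

```python
from dataclasses import dataclass, field
from typing import Callable

Reading = dict  # hypothetical shape, e.g. {"pedestrian_distance_ft": 30.0}
Rule = Callable[[Reading], bool]


@dataclass
class EdgeRuleEngine:
    """Hypothetical edge node: decide locally now, ship data to the cloud later."""

    rules: dict[str, Rule]
    batch_size: int = 3
    pending: list[Reading] = field(default_factory=list)

    def handle(self, reading: Reading) -> list[str]:
        # Time-sensitive rules run on site, with no cloud round trip.
        actions = [name for name, rule in self.rules.items() if rule(reading)]
        # The raw reading is queued for a later, non-urgent upload.
        self.pending.append(reading)
        return actions

    def ready_batch(self) -> list[Reading]:
        # Release a batch only once enough readings have accumulated
        # (a crude stand-in for "when bandwidth needs aren't as high").
        if len(self.pending) < self.batch_size:
            return []
        batch, self.pending = self.pending, []
        return batch

    def apply_rule_update(self, name: str, rule: Rule) -> None:
        # The cloud analyzes uploaded batches and pushes back revised rules.
        self.rules[name] = rule


engine = EdgeRuleEngine(rules={"stop": lambda r: r["pedestrian_distance_ft"] < 10})
print(engine.handle({"pedestrian_distance_ft": 5.0}))   # ['stop']
print(engine.handle({"pedestrian_distance_ft": 40.0}))  # []
# Later, a rule change arrives from the cloud:
engine.apply_rule_update("decelerate", lambda r: r["pedestrian_distance_ft"] < 50)
print(engine.handle({"pedestrian_distance_ft": 40.0}))  # ['decelerate']
print(len(engine.ready_batch()))                        # 3
```

The key design point is that the stop decision never waits on the upload: `handle` returns its actions immediately, and batching is a separate step that can be deferred as long as needed.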

In addition to speed and optimization, outage reduction is also a major reason to use edge computing. By pushing everything to the cloud, you’re leaving your business open to ISP failures and cloud server downtime. Many mission-critical operations, like railroads and chemical plants, won’t use the cloud at all today; their own server farms are the only way they can guarantee uptime.

Edge computing relies on the connection between individual sensors and a data center in the same local area, which drastically reduces exposure to outages. Our partner NXP experienced this firsthand at CES when a power outage brought down the internet for the entire conference, except for NXP’s computing experience, which was running on the edge.
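
Local decisions keep working through an outage like that because they never depended on the internet. The data destined for the cloud still has to wait somewhere, though. A common pattern for that (our assumption here; the article doesn’t name one) is store-and-forward: buffer records locally and flush them once the connection returns. The class below is a hypothetical sketch with a bounded buffer, so a very long outage drops the oldest records rather than exhausting memory. The `cloud_online` flag stands in for a real connectivity check, and appending to a list stands in for a real upload.

```python
from collections import deque


class StoreAndForward:
    """Hypothetical uplink buffer that keeps data local while the cloud is unreachable."""

    def __init__(self, max_buffered: int = 1000) -> None:
        # A bounded deque silently discards the oldest item once full.
        self.buffer: deque = deque(maxlen=max_buffered)
        self.uploaded: list = []

    def record(self, item: object) -> None:
        self.buffer.append(item)

    def try_flush(self, cloud_online: bool) -> int:
        """Send everything buffered if the cloud is reachable; return the count sent."""
        if not cloud_online:
            return 0  # outage: hold data locally and try again later
        sent = 0
        while self.buffer:
            # Appending to a list stands in for a real upload call.
            self.uploaded.append(self.buffer.popleft())
            sent += 1
        return sent


link = StoreAndForward(max_buffered=3)
for reading in range(5):
    link.record(reading)
print(link.try_flush(cloud_online=False))  # 0
print(link.try_flush(cloud_online=True))   # 3
print(link.uploaded)                        # [2, 3, 4]
```

The two oldest readings were dropped because the buffer holds only three. Sizing that buffer is a real trade-off between memory on the edge device and how long an outage it can ride out.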

What’s Next for Edge Computing?

Even with benefits like increased speed, optimization, and outage reduction, edge computing will need to reach critical mass before it’s widely adopted. Look at how long cloud adoption took, after all! But over time, businesses will learn how edge computing can speed up operations while reducing the usual risk factors.
