Google’s Infrastructure Security Design Overview

- Last Updated: December 2, 2024

Yitaek Hwang

After the Dyn DDoS attack in October 2016, the world saw just how vulnerable our “smart” things were as Netflix, Spotify, and Amazon all went down. As Calum McClelland explored in a post last week, security is absolutely essential, and there have been numerous discussions since the attack on how we can improve it (see, for example, Daniel Elizalde’s “How to Protect Your IoT Product from Hackers”).

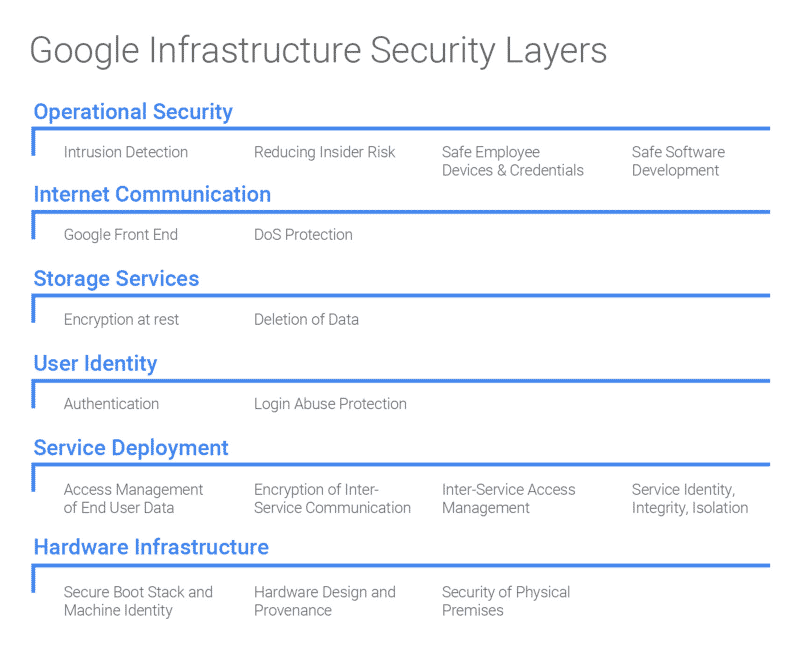

However, sometimes looking at a real-world example helps solidify the concepts. So this week, we will examine Google’s infrastructure security design overview, highlighting the important aspects in each layer of its technology stack and finishing with some key implications and takeaways.

Image Credit: Google Cloud Platform Post

Hardware Infrastructure

Security starts at the hardware level. It’s easy to forget that data is actually stored in physical facilities on thousands of server machines. On top of physical access controls (biometric verification, cameras, laser-based intrusion detection), Google also designs custom security chips for its servers to authenticate legitimate hardware devices on-premises. Each machine also uses cryptographic signatures over its low-level components (BIOS, bootloader, kernel, and base OS image) to ensure that it is running the correct software stack.

Google’s secure microSD card
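
To make the signed software stack more concrete, here is a minimal Python sketch of checking each boot stage before trusting a machine. It is an illustration under stated assumptions, not Google’s implementation: the key, the stage names, and the `sign` and `verify_boot_chain` helpers are hypothetical, and an HMAC stands in for the asymmetric signatures a hardware root of trust would normally use.

```python
import hashlib
import hmac

# Hypothetical signing key. Real hardware would rely on asymmetric signatures
# rooted in a security chip, not a shared secret held in software.
SIGNING_KEY = b"demo-key-not-for-production"

# The four components named in the article, checked in boot order.
BOOT_STAGES = ["bios", "bootloader", "kernel", "base_os_image"]


def sign(image: bytes) -> bytes:
    """Produce an HMAC-SHA256 tag that stands in for a vendor signature."""
    return hmac.new(SIGNING_KEY, image, hashlib.sha256).digest()


def verify_boot_chain(images: dict, signatures: dict) -> bool:
    """Return True only if every stage is present and its signature matches."""
    for stage in BOOT_STAGES:
        image = images.get(stage)
        signature = signatures.get(stage)
        if image is None or signature is None:
            return False
        # compare_digest avoids leaking information through timing.
        if not hmac.compare_digest(sign(image), signature):
            return False
    return True


images = {stage: f"{stage}-v1".encode() for stage in BOOT_STAGES}
signatures = {stage: sign(image) for stage, image in images.items()}
print(verify_boot_chain(images, signatures))  # True

images["kernel"] = b"tampered"
print(verify_boot_chain(images, signatures))  # False
```

The point of the sketch is that one modified component is enough to fail the whole chain, so a machine with an unexpected kernel never counts as legitimate.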

Service Deployment

At any point, various Google services (e.g. Gmail SMTP server, BigTable storage server, YouTube video transcoder) may be running on thousands of machines, controlled by a cluster orchestration service called Borg. The key takeaway from this section is that “the infrastructure does not assume any trust between services running on the infrastructure.”

For service identity, integrity, and isolation, Google notes that they “do not rely on internal network segmentation or firewalling as [the] primary security mechanism.” This may sound odd with the current focus on network virtualization and micro-segmentation, but we can see Google’s attempt at building a perimeter-less architecture.
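
One way to picture “no trust between services” is a callee that ignores where a request came from on the network and checks only the caller’s cryptographic identity and an explicit allow-list. The Python sketch below is a simplified illustration of that idea. The service names, keys, and the `make_credential` and `authorize` helpers are hypothetical, and the HMAC is a stand-in for whatever credentials the real infrastructure issues.

```python
import hashlib
import hmac

# Hypothetical per-service secrets standing in for cryptographic credentials.
SERVICE_KEYS = {
    "gmail-frontend": b"key-a",
    "video-transcoder": b"key-b",
}

# Hypothetical policy: which callers each service is willing to accept.
ALLOWED_CALLERS = {"contacts": {"gmail-frontend"}}


def make_credential(caller: str, payload: bytes) -> bytes:
    """Sign a request payload with the caller's own key."""
    return hmac.new(SERVICE_KEYS[caller], payload, hashlib.sha256).digest()


def authorize(callee: str, caller: str, payload: bytes, credential: bytes) -> bool:
    """Accept a request only if the identity is proven and explicitly allowed."""
    key = SERVICE_KEYS.get(caller)
    if key is None:
        return False  # unknown identity
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, credential):
        return False  # identity claim not backed by a valid credential
    return caller in ALLOWED_CALLERS.get(callee, set())


request = b"GET /contacts/alice"
print(authorize("contacts", "gmail-frontend", request,
                make_credential("gmail-frontend", request)))    # True
print(authorize("contacts", "video-transcoder", request,
                make_credential("video-transcoder", request)))  # False
```

Note that no IP address or network zone appears anywhere in the decision, which is the sense in which the architecture is perimeter-less.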

Data Storage

Before any data is written to storage, a central key management service encrypts the data, making it difficult for malicious firmware to hijack the data. Google goes further to track each hard drive throughout its life cycle. A decommissioned storage device cannot leave the facility until it has been wiped and verified twice independently. Moreover, “devices that do not pass this wiping procedure are physically destroyed (e.g. shredded) on-premise.”
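
The decommissioning rule can be read as a small decision procedure: wipe, verify twice independently, and destroy the device if any check fails. Here is a rough Python sketch of that logic. The `decommission` function and the stand-in wipe and verification callables are hypothetical and only illustrate the policy as quoted.

```python
from typing import Callable, Sequence


def decommission(
    device_id: str,
    wipe: Callable[[str], None],
    verifiers: Sequence[Callable[[str], bool]],
) -> str:
    """Return "release" only if every independent check confirms the wipe."""
    if len(verifiers) < 2:
        raise ValueError("need at least two independent verifiers")
    wipe(device_id)
    if all(verify(device_id) for verify in verifiers):
        return "release"
    return "destroy"  # e.g. shredded on-premise, per the quoted policy


# Hypothetical stand-ins for the wipe tool and two verification passes.
wiped = set()
result_ok = decommission("disk-001", wiped.add, [wiped.__contains__, lambda d: True])
result_bad = decommission("disk-002", lambda d: None, [wiped.__contains__, lambda d: True])
print(result_ok)   # release
print(result_bad)  # destroy
```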

Internet Communication

Google uses a service called the Google Front End (GFE) to protect against DoS attacks and ensure correct termination of TLS connections. GFE provides a “smart reverse-proxy front end” to manage the traffic and identify threats.
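
The article does not say how GFE throttles abusive traffic, so the following is only a generic illustration of one common building block for DoS protection at a reverse proxy: a token-bucket rate limiter. The `TokenBucket` class and its parameters are hypothetical, and timestamps are passed in explicitly so the behavior is easy to follow.

```python
class TokenBucket:
    """Allow short bursts while capping the sustained request rate."""

    def __init__(self, rate: float, capacity: float, now: float = 0.0):
        self.rate = rate          # tokens added per second
        self.capacity = capacity  # maximum burst size
        self.tokens = capacity    # start with a full bucket
        self.last = now           # time of the previous refill

    def allow(self, now: float) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = max(self.last, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


bucket = TokenBucket(rate=1.0, capacity=3.0)
burst = [bucket.allow(now=0.0) for _ in range(5)]
print(burst)                  # [True, True, True, False, False]
print(bucket.allow(now=2.0))  # True
```

In practice a front end would keep one bucket per client identity and combine it with other signals, but the refill-and-spend loop is the core of the technique.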

Operational Security

Up to this point, all the security measures discussed were designed into the infrastructure. Still, Google needs to actually operate the infrastructure securely to achieve full security. Google provides libraries to eliminate XSS vulnerabilities in web apps as well as other automated tools for detecting security bugs for its developers. Google also monitors access privileges carefully using application-level access management controls.
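
The article does not name the XSS-prevention libraries Google provides, but the underlying idea is usually to escape untrusted input before it reaches HTML. As a small stand-in, this Python sketch uses the standard library’s `html.escape`. The `render_comment` helper is hypothetical.

```python
import html


def render_comment(author: str, comment: str) -> str:
    """Build an HTML snippet with all user-supplied text escaped."""
    return "<p><b>{}</b>: {}</p>".format(html.escape(author), html.escape(comment))


print(render_comment("mallory", "<script>alert(1)</script>"))
# <p><b>mallory</b>: &lt;script&gt;alert(1)&lt;/script&gt;</p>
```

Because the script tag is rendered as text rather than markup, the browser displays it instead of executing it.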

Implications & Takeaways

One might ask why Google would release its security strategy publicly. Ivan Dwyer from ScaleFT thinks it’s a sales strategy to catch up to AWS by being completely transparent. Regardless, following the security practices mentioned here may help IoT companies stay conscious of security from day one. If you are interested, you can read Google’s whitepaper or skim BeyondCorp, which is often mentioned in the released report.
