How AI Overcomes the Challenges of Indoor Asset Tracking in Hospitals

- Last Updated: December 2, 2024

Cognosos

In IoT applications, AI is most often employed at the “top end” of the data stack – operating on large datasets, often from multiple sources. In a hospital setting, for example, AI and RTLS might be used for predictive analytics: can you predict the rate of ER admissions based on the weather? Can you better estimate when equipment requires maintenance based on usage?

At the “bottom end” of every IoT stack, however, AI is beginning to be applied to the sensors themselves with a very important effect: AI enables low-quality sensors to achieve very high-quality performance, delivering a return on investment that’s been absent in many IoT solutions until now.

AI and RTLS

One application of AI in sensors is in real-time location systems (RTLS). AI and RTLS are employed in many industries to keep track of moving assets to better monitor, optimize and automate critical processes.

A simple example in a hospital is the management of clean equipment rooms - storage rooms spread throughout a hospital where clean equipment is staged for use. A nurse requiring a piece of equipment should be able to find exactly what they need in a clean room.

However, if clean room stock levels are not maintained correctly, equipment might not be available. That forces a lengthy search that impacts patient safety and staff productivity, and ultimately pushes hospitals to over-buy expensive equipment (often double) to make sure there is excess availability.

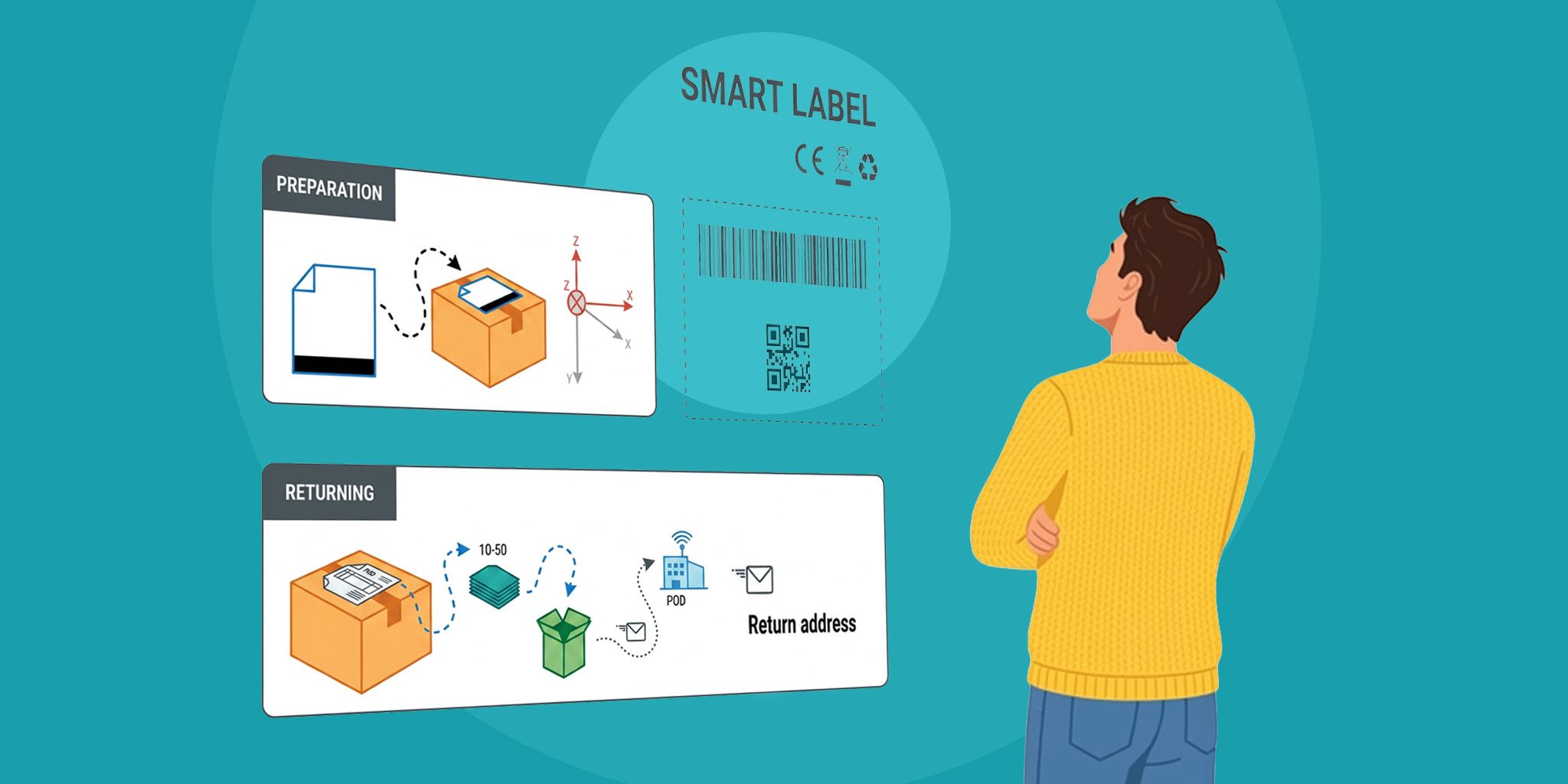

If you could determine the location of equipment automatically, you could easily keep track of the number of available devices in each clean room and automatically trigger replenishment when stock runs low. This is one use of RTLS where the requirement is to determine which room a device is in. Is it in a patient room? Then it’s not available. Is it in a clean room? Then it contributes to the count of available devices.
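As a rough illustration of that bookkeeping, the sketch below counts devices per clean room from a tag-to-room mapping and lists the rooms that have fallen below a minimum stock level. The room names, the `PAR_LEVEL` threshold, and both function names are hypothetical; the article does not describe a specific implementation.

```python
# Hypothetical room labels and threshold, chosen only for illustration.
CLEAN_ROOMS = ("clean_room_a", "clean_room_b")
PAR_LEVEL = 2  # assumed minimum number of devices staged per clean room


def available_counts(tag_rooms, clean_rooms=CLEAN_ROOMS):
    """Count devices per clean room.

    tag_rooms maps a tag ID to the room the RTLS reports it in.
    Devices located anywhere else (e.g. a patient room) are not available.
    """
    counts = {room: 0 for room in clean_rooms}
    for room in tag_rooms.values():
        if room in counts:
            counts[room] += 1
    return counts


def rooms_needing_replenishment(counts, par_level=PAR_LEVEL):
    """Return the clean rooms whose stock is below the par level."""
    return sorted(room for room, n in counts.items() if n < par_level)


if __name__ == "__main__":
    tag_rooms = {
        "pump-1": "clean_room_a",
        "pump-2": "clean_room_a",
        "pump-3": "patient_room_12",  # in use, so not counted
        "pump-4": "clean_room_b",
    }
    counts = available_counts(tag_rooms)
    print(counts)
    print(rooms_needing_replenishment(counts))
```

Note that the whole process depends on the room label being right: a single misplaced tag changes both the count and the replenishment decision.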

Determining which room a device is located in with very high confidence is therefore paramount: a location error that makes you think that the three IV pumps you are looking for are in patient room 12 when in fact they are in the clean room next door would lead to a breakdown of the process by under-estimating available pumps.

With RTLS, a mobile tag is attached to the asset, and fixed infrastructure (often in the ceiling or on the walls) determines the location of the tag. Various wireless technologies are used to achieve this, and this is where AI is making a significant positive impact. The technologies used fall into one of two camps:

- Wireless technologies that do not penetrate walls, for example, ultrasound and infrared. Room-level accuracy is achieved by placing a receiver in each room and listening for transmitting mobile tags: if a receiver can hear the tag, the tag must be in the same room.

- Wireless technologies that do penetrate walls, for example, Wi-Fi and Bluetooth (most often Bluetooth Low Energy or BLE). Receivers are placed throughout the building and measure the signal strength of received tag transmissions to determine the location of the tags algorithmically.

Common Issues

The problems with camp #1, the non-wall-penetrating technologies, are manifold. What happens when someone leaves the door open (a common policy in most hospitals)? How do you determine the location of a device when there are no walls (equipment is often stored in open areas)?

The answer is to add more and more infrastructure devices to the already very costly requirement to place a device in every room, meaning that these solutions quickly become cost prohibitive, and very cumbersome to deploy.

Camp #2 requires a lot less infrastructure and is more appealing from a price standpoint, but there are limitations. Measuring the signal strength received from a single tag at multiple fixed receivers supports a deterministic calculation of tag location. By using generic models for how signal strength drops over distance, a rough range estimate can be made, and three range estimates yield a 2D location estimate. Geofences in software translate those 2D coordinates into room occupancy.
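The range-and-trilaterate pipeline described above can be sketched as follows. This is a minimal illustration, not any vendor's implementation: it assumes a log-distance path-loss model, and the reference power at 1 m (-59 dBm), the path-loss exponent (2.0), the receiver positions, and the rectangular geofences are all illustrative values.

```python
def rssi_to_range(rssi_dbm, rssi_at_1m=-59.0, path_loss_exponent=2.0):
    """Estimate distance in meters from RSSI with a log-distance model.

    Both default parameters are assumed values for illustration only.
    """
    return 10 ** ((rssi_at_1m - rssi_dbm) / (10.0 * path_loss_exponent))


def trilaterate(anchors, ranges):
    """Solve for (x, y) from three receiver positions and three ranges.

    Subtracting the first circle equation from the other two gives a
    2x2 linear system, solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("receivers are collinear; position is ambiguous")
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y


def room_for(point, geofences):
    """Map a 2D point to a room using axis-aligned rectangular geofences.

    geofences maps a room name to (xmin, ymin, xmax, ymax) in meters.
    Returns None if the point falls outside every geofence.
    """
    x, y = point
    for name, (xmin, ymin, xmax, ymax) in geofences.items():
        if xmin <= x < xmax and ymin <= y < ymax:
            return name
    return None


if __name__ == "__main__":
    anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
    rssi = [-73.0, -77.0, -75.5]  # made-up readings, one per receiver
    ranges = [rssi_to_range(r) for r in rssi]
    geofences = {
        "patient_room_12": (0.0, 0.0, 5.0, 5.0),
        "clean_room": (5.0, 0.0, 10.0, 5.0),
    }
    print(room_for(trilaterate(anchors, ranges), geofences))
```

With exact ranges the calculation recovers the true position, but every error in a range estimate passes straight through to the coordinates and from there to the room decision.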

The trouble is that the way signal strength drops over distance is complex and chaotic, influenced not only by signal blockage (walls, equipment, people), but also by the interactions of multiple signal reflections (“multipath fading”). The net result is that location is determined with an accuracy of 8 to 10 meters or worse, which is not nearly good enough to determine which room an object is in.
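To get a feel for how sensitive range estimates are to blockage and fading, the short sketch below computes how much an unmodelled signal loss inflates the estimated distance. It assumes a log-distance path-loss model with exponent `n`, which the article does not specify; the function name and the default exponent of 2.0 are illustrative.

```python
def range_error_factor(fade_db, path_loss_exponent=2.0):
    """Factor by which an unmodelled loss of fade_db inflates the range.

    Under a log-distance model, d = 10 ** ((P0 - RSSI) / (10 * n)), so an
    extra loss of fade_db multiplies d by 10 ** (fade_db / (10 * n)).
    """
    return 10 ** (fade_db / (10.0 * path_loss_exponent))


if __name__ == "__main__":
    true_distance_m = 5.0
    for fade_db in (0.0, 6.0, 10.0):
        estimate = true_distance_m * range_error_factor(fade_db)
        print(f"{fade_db:4.1f} dB fade -> estimated {estimate:.1f} m")
```

Under these assumptions, a 6 dB loss roughly doubles the estimated range and a 10 dB loss roughly triples it, so a tag 5 m away can appear to be about 16 m away.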

Machine Learning

Those with a machine-learning background may have spotted an opportunity: determining which room an object is in is not a tracking problem, but a classification problem. As with all epiphanies, it took a new generation of RTLS companies to step back from their algorithms to see the problem in a new light. It’s here that AI is transforming RTLS.

What if you could leverage the low-cost technologies of Camp #2 to achieve the same level of performance as Camp #1? What if you could deliver all the value without the cost? By leveraging BLE sensors and applying machine learning, this is exactly what AI brings to the party.

Rather than jumping through hoops to make very poor range estimates based on signal strength, why not use signal strength as a feature to train a classification algorithm? Since the signals penetrate multiple walls, a single tag can hear signals from several fixed infrastructure devices, which provides plenty of features for a very high-confidence inference about room occupancy. The AI is trained once during installation, learning the features sufficient to distinguish Room 1 from Room 2, and so on.
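The classification framing can be sketched with a very simple model. The article does not say which algorithm is used, so the example below swaps in a k-nearest-neighbours classifier purely for illustration. The beacon IDs, the `MISSING_RSSI` floor for beacons a tag did not hear, and all function names are assumptions.

```python
from collections import Counter

MISSING_RSSI = -100.0  # assumed floor (dBm) for beacons the tag did not hear


def to_vector(readings, beacon_ids):
    """Turn {beacon_id: rssi_dbm} into a fixed-order feature vector."""
    return [readings.get(b, MISSING_RSSI) for b in beacon_ids]


def train(samples, beacon_ids):
    """Store labelled fingerprints collected once at installation time.

    samples is a list of (readings_dict, room_label) pairs.
    """
    return [(to_vector(r, beacon_ids), room) for r, room in samples]


def classify(model, readings, beacon_ids, k=3):
    """Return (room, vote_share) from the k nearest stored fingerprints."""
    query = to_vector(readings, beacon_ids)
    nearest = sorted(
        model,
        key=lambda item: sum((a - b) ** 2 for a, b in zip(item[0], query)),
    )[:k]
    votes = Counter(room for _, room in nearest)
    room, count = votes.most_common(1)[0]
    return room, count / len(nearest)


if __name__ == "__main__":
    beacons = ["b1", "b2", "b3"]
    samples = [  # made-up survey data for two adjacent rooms
        ({"b1": -50, "b2": -70, "b3": -85}, "room_1"),
        ({"b1": -52, "b2": -72, "b3": -83}, "room_1"),
        ({"b1": -49, "b2": -69}, "room_1"),
        ({"b1": -72, "b2": -51, "b3": -66}, "room_2"),
        ({"b1": -70, "b2": -53, "b3": -68}, "room_2"),
        ({"b1": -74, "b2": -50, "b3": -64}, "room_2"),
    ]
    model = train(samples, beacons)
    print(classify(model, {"b1": -51, "b2": -71, "b3": -84}, beacons))
```

No distance is ever computed here: the model only needs the signal-strength pattern in one room to differ from the pattern in the next, which is why a production system could substitute any classifier that fits the data.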

This is a fundamental shift in thinking with a very profound outcome. For traditional Wi-Fi and BLE systems, the chaotic signal propagation in buildings creates huge variations in signal strength, confounding range-estimation algorithms.

The result is very poor accuracy, but conversely, that same variation in signal strength from one place to another is exactly the feature variation that makes ML such a powerful tool. The signal propagation features that crush traditional approaches are the exact fodder you need to feed an AI.

RTLS has entered a new era where sophisticated machine learning algorithms running on cloud-sized brains can take a classification approach to object location. The result of AI and RTLS is high-performing, low-cost sensors that are improving critical processes and allowing hospitals to provide better service and achieve better outcomes—all at a lower cost.
