How Data Marts Are Contributing to the Disruption of Manufacturing Workflows

- Last Updated: December 2, 2024

Philip Piletic

Industry insiders have long likened the constant data streams sent by connected IoT devices to a fire hose of information. It's tough for even the largest businesses to manage these streams, and it's nearly impossible for small-to-medium-sized firms to do so on their own. Nevertheless, networked sensors have become ubiquitous because they help managers and technicians identify problems in production chains. Data marts, which are designed specifically to meet the needs of particular groups of users, are starting to replace full-fledged data warehouses as a way to manage these flows.

When supply hiccups and parts shortages become significant, managers can examine the data in these streams to resolve the issues. That's only true, however, if they have some way of processing the information in question. It's getting to the point where people feel that the analytic algorithms run on an average data stream can disrupt the entire industry.

How Analytics Are Disrupting Manufacturing Workflows

Technologists might be the only people who consider disruption of an industry to be a good thing, but that's because they use the phrase to refer to new developments that are so influential they completely change the marketplace. Analytics-driven data processing has proven to be particularly disruptive in the manufacturing sector. Companies of all sizes have taken to collecting data to help them make better decisions. By examining this information, managers have been able to better understand how their machinery is utilized.

Wasted time has historically been one of the greatest causes of inefficiencies in the industrial sector. Considering how competitive today's global market is, manufacturing chains can no longer afford to be held back by any significant amount of downtime. Engineers have done their best to design production chains that are as efficient as possible. Still, outside factors often play a role in reducing the overall efficiency of any given operation.

Whether a problem is due to misuse, poorly coordinated downtime, or installation problems, technologists can identify it fairly easily by spotting patterns in raw data streams. However, the information needs to be collected in a repository that each downstream output can easily access. On top of this, it needs to be stored so that it can't be easily tampered with. Information that's been manipulated in any fashion can't be trusted and shouldn't be used to make decisions.
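One common way to make stored readings tamper-evident is a hash chain, where each record carries a hash of the one before it. The article does not name a specific technique, so the sketch below is only an illustration of the idea. The function names (`append_record`, `verify_chain`) and the sample sensor readings are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record (an assumption)

def append_record(chain, reading):
    """Append a reading, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    payload = json.dumps(reading, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    chain.append({"reading": reading, "prev_hash": prev_hash, "hash": digest})

def verify_chain(chain):
    """Return True only if every entry still matches its stored hash."""
    prev_hash = GENESIS
    for entry in chain:
        payload = json.dumps(entry["reading"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical readings from a single machine.
log = []
append_record(log, {"sensor": "press-01", "temp_c": 71.5})
append_record(log, {"sensor": "press-01", "temp_c": 72.1})
```

Editing any stored reading after the fact changes its hash, so `verify_chain` would return `False` for the altered log. This detects tampering but does not prevent it. A production system would also need to protect the most recent hash.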

Since a data mart provides a specific access pattern for retrieving client-facing information for a specific group of users, it can help specialists draw insights without having to wade through pages of irrelevant statistics or potentially modified numbers.
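As a rough illustration of that access pattern, a mart can be as simple as a view that exposes one group's slice of a larger store. The sketch below uses Python's built-in `sqlite3` module with an in-memory database. The table, view, and column names and the sample rows are all hypothetical, and a real deployment would sit on a proper warehouse rather than SQLite.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Stand-in for the full warehouse: every metric from every machine.
conn.execute(
    "CREATE TABLE readings (machine TEXT, metric TEXT, value REAL, recorded_at TEXT)"
)
conn.executemany(
    "INSERT INTO readings VALUES (?, ?, ?, ?)",
    [
        ("press-01", "vibration_mm_s", 2.1, "2024-01-01T08:00"),
        ("press-01", "energy_kwh", 14.0, "2024-01-01T08:00"),
        ("lathe-07", "vibration_mm_s", 6.8, "2024-01-01T08:00"),
        ("lathe-07", "energy_kwh", 9.5, "2024-01-01T08:00"),
    ],
)

# The "mart": a view exposing only what the maintenance group needs.
conn.execute(
    """
    CREATE VIEW maintenance_mart AS
    SELECT machine, value AS vibration_mm_s, recorded_at
    FROM readings
    WHERE metric = 'vibration_mm_s'
    """
)

rows = conn.execute(
    "SELECT machine, vibration_mm_s FROM maintenance_mart ORDER BY machine"
).fetchall()
print(rows)
```

Maintenance staff querying `maintenance_mart` never see the energy figures, and a second view could serve a different group from the same underlying table.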

Building Data Marts Around Existing Paradigms

Regardless of the type of data being streamed back to the manufacturer or vendor of a particular device, handling it shouldn't require operators to reinvent the wheel. Several preexisting services are already available to help streamline development and reduce the amount of coding that any given organization has to do.

Azure Stream Analytics and IBM's InfoSphere are probably the best known of these. Still, various Apache-based systems have also become popular with companies that are particularly concerned about privacy. Any of these storage management systems could be tailored to fit the needs of those deploying a data mart.

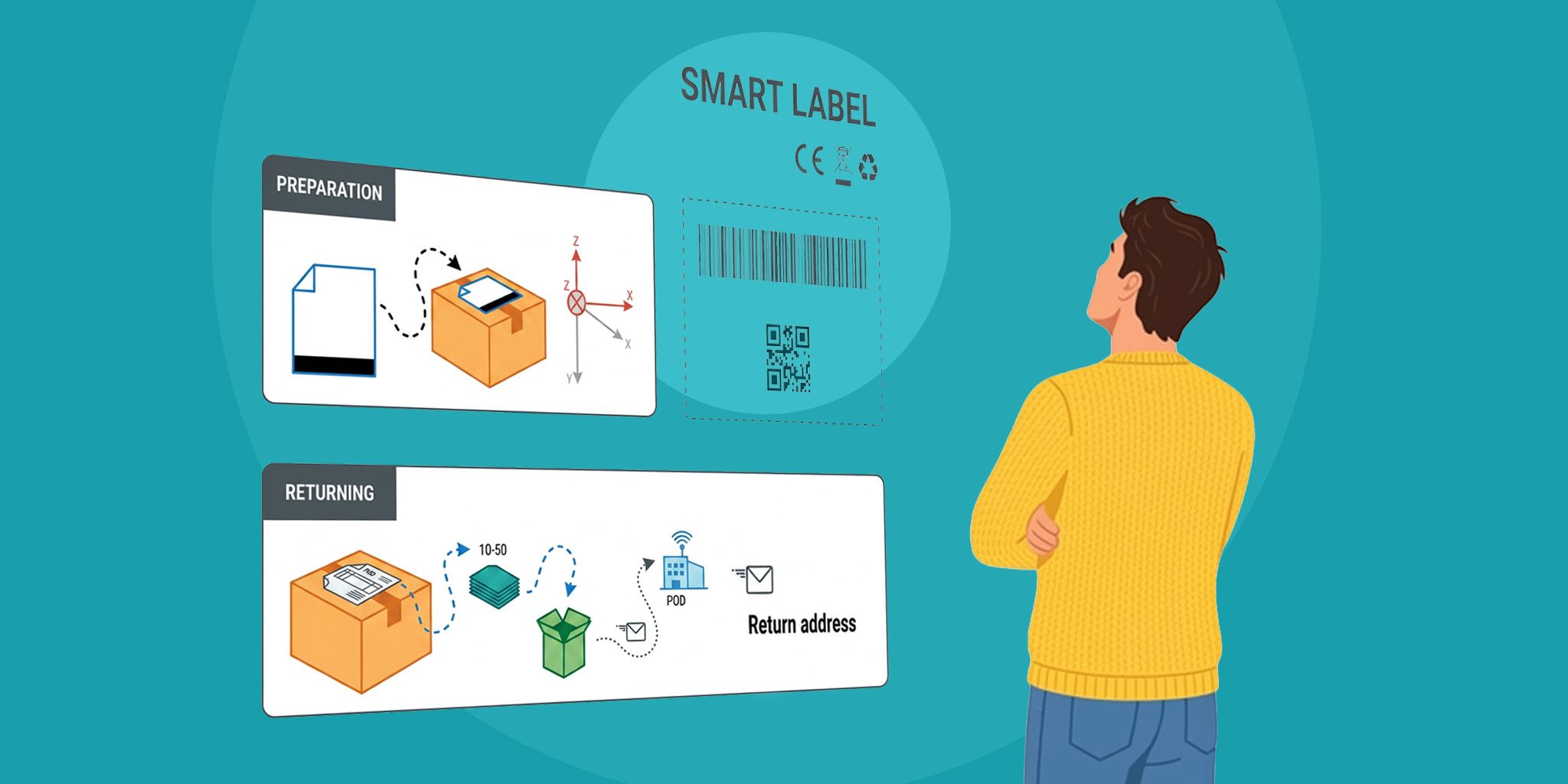

End users have to be able to interact with their information as well. A smartwatch that monitored health data wouldn't be very practical if it didn't provide at least some way for users to track their fitness progress. A well-designed wearable would have to interface with a web-based dashboard that lets users see their data and visualize it graphically. Even companies that go so far as to develop their own subroutines for polling IoT sensors should still draw data from a single mart.

The same is true of the manufacturing examples above. Technicians who are monitoring multiple data streams related to the health of specific workflows need an at-a-glance view of this information. At the same time, analytics specialists will eventually want to search for patterns and identify potential issues by processing large amounts of information at once.

By constructing a data mart that serves as a single source of truth for all of these various outputs, those who need to draw conclusions from sensor outputs can do so without getting bombarded with irrelevant data. Separate marts can be safely instantiated for each type of personnel involved in an organization, making them particularly helpful for certain applications. The best part is that by using these pre-existing frameworks, the total amount of work that has to be done in-house will drop dramatically.

Data Mart Instantiation in an Edge Computing Environment

Computer scientists have long been promoting the concept of edge computing along with data governance to address problems related to agility and scalability. Sensors that monitor edge gateway devices send out more packets than almost any other type of IoT module. These represent a high-performance market segment that has thus far been mostly managed directly.

Sending information through massive cloud databases will eventually start to put pressure on existing network infrastructures. Investing in network upgrades often isn't possible, especially in niche applications where everything has to be done over copper for whatever reason. Engineers engaged in data mart development have been able to push the frontier further and further by ensuring that sensors pass individual packets to one another before reporting to the central repository. That means more information can be processed by machines locally rather than being shoved through on a push notification.

Local collection, storage, and processing of information ensure that individual IoT sensors connected to a data mart only ever share selected findings rather than repetitive telemetry. Naturally, devices attached to a network in this way will have to provide health reports whenever a system administrator asks for them. At other times, however, this information normally isn't relevant, so it can be safely withheld.
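The filtering described above can be sketched in a few lines. The example below is a minimal illustration, not a reference design: the function names, the fixed `low`/`high` thresholds, and the sample temperature window are all assumptions, and a real edge device would likely use a more robust anomaly test.

```python
from statistics import mean

def select_findings(window, low, high):
    """Summarize a window of local readings and keep only out-of-range values."""
    anomalies = [r for r in window if r < low or r > high]
    return {
        "count": len(window),
        "mean": round(mean(window), 2),
        "anomalies": anomalies,
    }

def should_report(findings, health_requested=False):
    """Report upstream only on anomalies or an explicit health request."""
    return health_requested or bool(findings["anomalies"])

# Hypothetical window of temperature readings collected on the device.
window = [70.1, 70.3, 70.2, 85.4, 70.0]
findings = select_findings(window, low=60.0, high=80.0)

if should_report(findings):
    print("send upstream:", findings)
```

Here the device forwards one compact summary instead of five raw readings, and a window with no out-of-range values would produce no traffic at all unless an administrator requested a health report.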

Researchers have suggested that one-third of current IoT solutions will be abandoned before they reach a general deployment stage. This is largely due to a lack of planning. Data marts provide managers and administrators with a blueprint for putting their systems into play, which means companies that adopt a good standard now will be in a better position to use their gear over the long term.
