Project Natick: Underwater Data Centers

- Last Updated: December 2, 2024

Yitaek Hwang

To take advantage of freely available cool air, Google opened a data center in Finland and Facebook opened one in a Swedish city near the Arctic Circle. Now Microsoft wants to follow suit by building one underwater. Not only does the company argue that this plan is feasible, it thinks it could also “reduce construction costs, make it easier to power these facilities with renewable energy, and even improve their performance,” according to Sean James, a Microsoft engineer.

Before we weigh James’s claims, note that putting a data center underwater comes with a myriad of challenges:

- The container must stay dry.

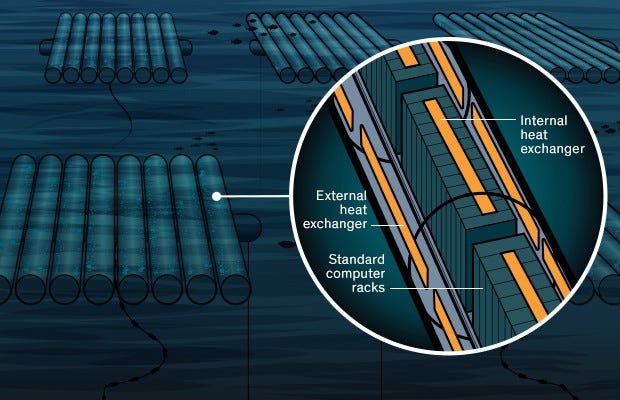

- Seawater should cool the servers efficiently.

- Containers must be free from barnacles and other sea life that may inhibit the cooling process.

Why go through all this trouble to build something underwater when you can build it in Finland or Sweden like the other tech giants? James cites several advantages beyond cooling.

First of all, the Microsoft team argues that building underwater pods avoids the hassle that comes with constructing data centers on land. Today, companies must deal with building codes, taxes, electricity supply, and network connectivity in other countries before they can install a single rack of servers.

By contrast, the ocean provides a relatively uniform environment for these underwater pods. The pods can be built almost on demand (instead of being planned and negotiated with governments and landowners long in advance) and deployed at any coastal site with little customization. Microsoft’s goal is to deploy a pod within 90 days of purchase.

Microsoft also points out that land-based data centers are often built in remote locations, which limits how fast their servers can respond to requests. The team notes that almost half the world’s population lives within 100 km of the ocean and concludes that placing pods closer to where people live will reduce latency.
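To get a feel for the distance argument, here is a minimal sketch of best-case round-trip delay over optical fiber. It assumes light travels through fiber at roughly 200,000 km/s (about two-thirds of its speed in a vacuum) and ignores routing, switching, and server processing time, so real latencies will be higher. The function name and the sample distances are illustrative, not figures from Microsoft.

```python
# Assumption: light in optical fiber travels at roughly 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000


# Illustrative distances between a user and a data center (hypothetical).
for km in (100, 1000, 5000):
    print(f"{km:>5} km: {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, a user 100 km from a pod sees about 1 ms of propagation delay per round trip, while a user 5,000 km from a remote facility sees about 50 ms before any other overhead is counted.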

Lastly, Microsoft notes that many data centers cool the surrounding air by evaporation, which consumes water. Natick pods would instead use the surrounding seawater to carry heat away from the air inside, so they would not be “consuming” water in a non-renewable manner. Even at intermediate depths of 10 to 200 m, the water stays between 14 and 18 °C, making it an ideal environment for cooling data centers.

In fact, Microsoft’s pilot pod, named Leona Philpot, successfully showed that submerged pods could keep temperatures low with less energy overhead than mechanical cooling or free-air approaches.
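The cooling argument rests on a simple heat balance: the heat carried away equals the water's mass flow times its specific heat times its temperature rise. The sketch below applies that relation to estimate how much seawater must pass the heat exchanger. The 100 kW heat load and the 5 K temperature rise are hypothetical inputs chosen for illustration, not Natick specifications, and the specific heat of seawater is taken as roughly 3,990 J/(kg·K).

```python
# Assumption: approximate specific heat of seawater, in J/(kg*K).
SEAWATER_SPECIFIC_HEAT = 3990.0


def required_flow_kg_per_s(heat_load_w: float, delta_t_k: float) -> float:
    """Seawater mass flow needed to absorb heat_load_w with a rise of delta_t_k.

    Rearranges the heat balance Q = m_dot * c * delta_T to solve for m_dot.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (SEAWATER_SPECIFIC_HEAT * delta_t_k)


# Hypothetical example: 100 kW of server heat, water allowed to warm by 5 K.
flow = required_flow_kg_per_s(100_000, 5.0)
print(f"Required seawater flow: {flow:.2f} kg/s")
```

With these inputs the required flow comes to about 5 kg/s. The same relation shows why the downstream warming is small: the larger the volume of water that mixes with the outflow, the smaller the temperature rise for the same heat load.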

There’s still some work left to be done. While Natick’s current cooling mechanism is economical and efficient for standard servers, more power-hungry servers might need more exotic approaches, such as dielectric liquids or high-pressure helium gas. Keeping ocean creatures off the pods is also a challenge, as barnacles could disrupt the outflow of heat from these data centers.

Lastly, while Microsoft downplays the environmental impact, stating that “the water just meters downstream of a Natick vessel would get a few thousandths of a degree warmer at most,” a long-term study might be required to observe the effects of thermal pollution from submerged pods.

If Microsoft figures out a way to safely deploy these pods without harming the environment, this might be the most significant advance in data center cooling since DeepMind’s AI cut the energy Google uses for data center cooling by up to 40% in 2016.

You can read the original article, “Dunking the Data Center,” online or in print in the March 2017 issue of IEEE Spectrum.
