Beyond Traditional Security: Protecting Data in Autonomous AI Systems

- Last Updated: April 16, 2026

Ravi Rao

The expansion of artificial intelligence (AI)-driven systems is redefining the scope of big data security. As organizations scale their use of autonomous agents and machine learning (ML) pipelines, they face a new class of threats. Distributed denial-of-service (DDoS) attacks now target inference endpoints and orchestration layers, while data leaks emerge from overlooked vectors. The security challenge is no longer limited to protecting data at rest or in transit. It now extends to protecting data while AI systems are processing it, requiring organizations to secure both the information and the operations that manipulate it. Addressing these risks requires a combination of architectural foresight, real-time behavioral monitoring, and strict access governance. Without these guardrails, system complexity can outpace an organization’s ability to contain threats.

The new threat environment: AI-driven vulnerabilities

Autonomous AI systems open entirely new frontiers for cyberattacks. Attack vectors that didn’t exist a few years ago are now live vulnerabilities. Attackers increasingly exploit the logic and autonomy of AI agents, targeting the ways they act upon data. These risks materialize in several forms, including:

- Prompt injection, model manipulation, and model inversion. These represent some of the most immediate risks. Much like structured query language (SQL) injection, prompt injection manipulates the instructions given to AI models to change their behavior. Seemingly harmless requests can consume system resources. A cybercriminal could write prompts that force a model into endless computation loops or trick it into executing destructive commands. Real-world attacks have demonstrated how cybercriminals embed hidden instructions in text, links, or documents that cause large language models to disclose confidential information or bypass safeguards. Model inversion, by contrast, exploits queries to reconstruct sensitive training data, effectively turning the model into a data-leakage vector.

- Multi-agent vulnerabilities. As more AI agents share information to solve complex tasks using the Model Context Protocol (MCP), the vulnerabilities of multi-agent systems compound the risks. For example, an AI-powered support agent might use MCP to query both a customer relationship management (CRM) system and a logistics platform to resolve an issue. If the logistics connection is compromised, an attacker could exfiltrate data from the CRM. While powerful, these interactions create opportunities for data leakage or corruption, and a single compromised agent can spread that vulnerability across the system, magnifying the impact.

- DDoS attacks. DDoS attacks on AI pipelines can overwhelm inference endpoints. Attackers deploy armies of bots to bombard AI application programming interfaces (APIs) with spurious requests, consuming compute resources until legitimate users are locked out. Security firms have documented cases of large-scale bot-driven floods designed to overload AI inference services.

- Shadow AI tools. Employees use unvetted AI applications without IT oversight, creating governance blind spots. These unsanctioned tools bypass organizational safeguards, making it nearly impossible to enforce consistent security policies.
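One common mitigation for the bot-driven floods described in the list above is per-client rate limiting in front of the inference endpoint. The following is a minimal sketch of a token bucket, not a production limiter. The names `TokenBucket` and `admit`, the burst capacity of 5, and the refill rate of one request per second are all illustrative assumptions, and timestamps are passed in explicitly so the behavior is easy to reason about.

```python
class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, now: float) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity  # start full so legitimate bursts succeed
        self.updated = now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill for the time elapsed since the last call, capped at capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# One bucket per client identifier (hypothetical: API key, account, or IP).
buckets: dict = {}


def admit(client_id: str, now: float, capacity: float = 5.0, rate: float = 1.0) -> bool:
    """Return True if this client's request may proceed to the model."""
    bucket = buckets.setdefault(client_id, TokenBucket(capacity, rate, now))
    return bucket.allow(now)
```

With these assumed settings, a client that sends ten requests in the same instant gets the first five through and the rest rejected, while other clients are unaffected. A per-client limit alone does not stop a flood spread across many identities, so it is usually one layer among several.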

These risks are amplified by human error, such as when employees unintentionally expose sensitive data to an AI agent. Taken together, these developments mark a decisive shift. Protecting modern data environments requires defending against theft or corruption while securing the operations of autonomous systems that interpret and act on that data.

Zero trust architecture for autonomous systems

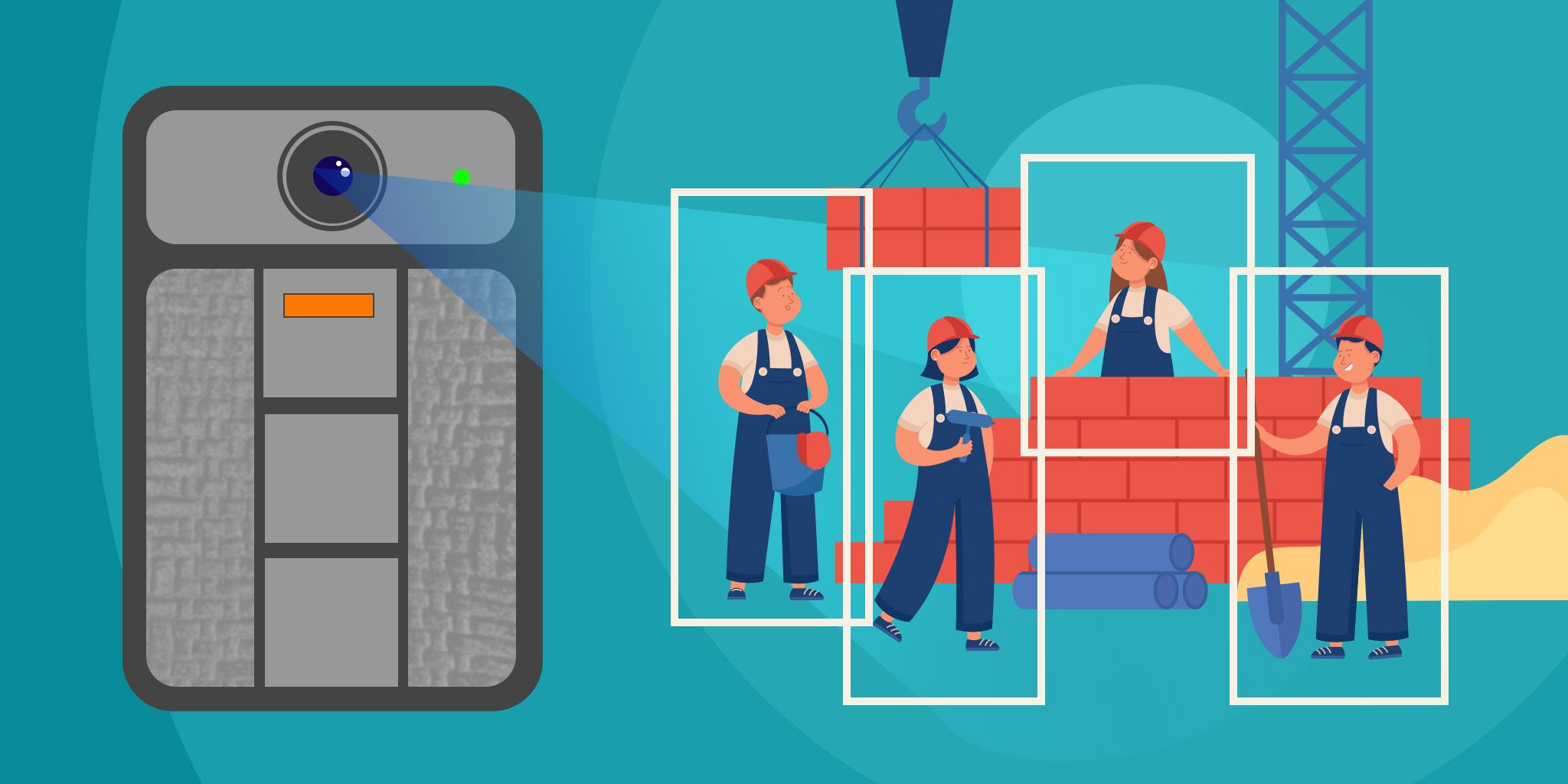

As AI systems gain autonomy, organizations are adopting the zero trust model as the foundation for protecting the data that intelligent agents handle. With every interaction verified and strictly monitored, a zero trust approach creates proactive security frameworks that can anticipate emerging threats. Zero trust for autonomous systems involves several layers:

- Continuous authentication of AI agents. Subject AI agents to ongoing identity and session validation, with tokens and credentials reverified at each critical action. This approach prevents compromised or rogue agents from persisting undetected and ensures only legitimate agents can access sensitive workflows or data.

- Granular role-based access control (RBAC). Assign AI agents the minimum privileges necessary for their function, using fine-grained roles and permissions. Limit data and system access to what is strictly required, reducing the blast radius if an AI agent is compromised or manipulated.

- Geographic and behavioral anomaly detection. If an AI agent authenticates in New York and seconds later requests access from Europe, that’s a red flag. Continuously monitor AI agent activity for deviations in geography or usage, such as unusual data requests. Flag and respond to anomalies to quickly detect and contain potential exploitation or lateral movement.

- Trusted network enforcement. Restrict AI agent communications to trusted, segmented networks, such as a virtual private network (VPN) or private subnets. Block public internet access for sensitive operations, minimizing exposure to external threats so only authorized internal endpoints can interact with critical data and systems.
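The first two layers above, continuous authentication and least-privilege RBAC, can be combined into a single check that runs before every sensitive action. The sketch below is illustrative only: the role table, action names, 60-second token lifetime, and the `issue_token` and `authorize` helpers are assumptions, and a real deployment would use an established token format and load the signing key from a secrets manager.

```python
import hashlib
import hmac

SECRET = b"demo-only-secret"  # assumption: never hard-code keys in real systems

# Hypothetical role table: each agent role lists the only actions it may perform.
ROLE_PERMISSIONS = {
    "support-agent": {"crm:read_ticket", "logistics:read_shipment"},
    "billing-agent": {"crm:read_invoice"},
}


def _sign(payload: str) -> str:
    return hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id: str, role: str, now: float, ttl: int = 60) -> str:
    """Issue a short-lived signed token so credentials must be renewed often."""
    payload = f"{agent_id}|{role}|{int(now) + ttl}"
    return f"{payload}|{_sign(payload)}"


def authorize(token: str, action: str, now: float) -> bool:
    """Re-verify signature, expiry, and role permission on every action."""
    parts = token.split("|")
    if len(parts) != 4:
        return False
    agent_id, role, expires, signature = parts
    expected = _sign(f"{agent_id}|{role}|{expires}")
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    if now >= int(expires):
        return False
    # Unknown roles get an empty permission set (deny by default).
    return action in ROLE_PERMISSIONS.get(role, set())
```

Because the role is part of the signed payload, an agent that edits its own token to claim a broader role fails the signature check, and an expired token is rejected even if the agent's session is otherwise still open.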

Without strict, layered access management, a single rogue agent or compromised connection can propagate errors or policy violations across an enterprise. Analyst surveys show that 68% of organizations have experienced data leakage incidents tied to AI tools. Yet, only 23% have formal security protocols in place, highlighting how inadequately secured AI use can lead to harmful data exposure.
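The New York to Europe example from the anomaly detection layer is often called an "impossible travel" check. A rough sketch follows, assuming each authentication event carries an approximate latitude, longitude, and timestamp. The speed ceiling of 1,000 km/h and the 50 km minimum distance (to tolerate imprecise geolocation) are illustrative thresholds, not recommended values.

```python
import math

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_SPEED_KMH = 1000.0  # assumption: roughly airliner speed
MIN_DISTANCE_KM = 50.0            # assumption: ignore small geolocation jitter


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev: tuple, curr: tuple) -> bool:
    """prev and curr are (latitude, longitude, unix_seconds) for one agent."""
    distance = haversine_km(prev[0], prev[1], curr[0], curr[1])
    if distance < MIN_DISTANCE_KM:
        return False
    # Guard against zero or negative intervals from clock skew.
    hours = max((curr[2] - prev[2]) / 3600.0, 1e-9)
    return distance / hours > MAX_PLAUSIBLE_SPEED_KMH
```

An agent seen in New York and then in Paris five seconds later is flagged, while the same pair of locations eight hours apart is not. Agents that legitimately run in several cloud regions would need an allowlist or a different signal, which this sketch does not model.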

Data governance and policy framework

Governance and oversight are necessary to guide the responsible use of AI systems. For organizations deploying autonomous agents, it’s critical for technical safeguards to be robust and adaptive.

Data classification should use strict labels, such as public, private, or restricted, and enforce them down to the column level so that AI agents’ access is limited programmatically. Granular RBAC enforces least privilege, preventing users and agents from exceeding their scope or escalating privileges.
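Column-level enforcement can be as simple as filtering every row against a label table before it reaches an agent. The sketch below assumes the three labels form an ordered hierarchy (public < private < restricted), which the text does not state explicitly. The column names and the `filter_row` helper are hypothetical.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    PRIVATE = 1
    RESTRICTED = 2


# Hypothetical label table; in practice this would come from a data catalog.
COLUMN_LABELS = {
    "order_id": Sensitivity.PUBLIC,
    "email": Sensitivity.PRIVATE,
    "card_number": Sensitivity.RESTRICTED,
}


def filter_row(row: dict, clearance: Sensitivity) -> dict:
    """Return only the columns the agent's clearance allows it to see."""
    return {
        column: value
        for column, value in row.items()
        # Unlabeled columns are treated as RESTRICTED, so the filter fails closed.
        if COLUMN_LABELS.get(column, Sensitivity.RESTRICTED) <= clearance
    }
```

Treating unlabeled columns as restricted means a newly added field stays hidden from agents until someone classifies it, which is the safer default when schemas change faster than governance reviews.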

With hackers using AI tools to compromise AI agents, it’s vital for policy enforcement to be dynamic and real-time. Prompt shields can block destructive or unauthorized commands, while continuous monitoring detects and responds to anomalous or policy-violating behavior. Governance platforms can automate and standardize these controls across systems.
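As a deliberately simplified illustration of the prompt shield idea, the sketch below screens incoming text against a small deny list before it reaches a model or tool. The patterns and the `shield` function are assumptions made for this example. Pattern matching alone is easy to evade, so production shields typically layer classifiers and output-side checks on top of it.

```python
import re
from typing import Optional, Tuple

# Illustrative deny list only; real rule sets are larger and tuned over time.
DENY_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"\bdrop\s+table\b", re.IGNORECASE),
    re.compile(r"\brm\s+-rf\b", re.IGNORECASE),
]


def shield(prompt: str) -> Tuple[bool, Optional[str]]:
    """Return (allowed, matched_pattern). Blocked prompts report the rule hit."""
    for pattern in DENY_PATTERNS:
        if pattern.search(prompt):
            return False, pattern.pattern
    return True, None
```

Returning the matched rule alongside the decision lets the caller write it to an audit log, which supports the continuous monitoring described above.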

Governance is not just technical. Delegating decision-making to AI agents introduces ethical dilemmas. It’s imperative for organizations to recognize the responsibility that comes with this power, remain vigilant against negative consequences, and ensure that trust, transparency, and accountability are at the core of their autonomous systems.

Real-time monitoring and threat detection

AI-driven systems and their defenses need to remain dynamic. Real-time monitoring is critical to detect misuse or anomalies before they escalate into full-scale breaches. AI-powered anomaly detection tools can flag behavior deviations, such as excessive API calls or mass file dumps. Subscription abuse is another telltale sign. Attackers often create hundreds of free trial accounts to bypass throttling, thereby overwhelming inference endpoints.
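Detecting excessive API calls usually comes down to counting events per identity over a sliding time window. The following is a minimal sketch of that idea. The `CallRateMonitor` class, the 60-second window, and the threshold of 100 calls are illustrative assumptions, and a real system would learn thresholds from each agent's baseline rather than hard-code them.

```python
from collections import defaultdict, deque


class CallRateMonitor:
    """Flags an agent whose call count within a sliding window exceeds a limit."""

    def __init__(self, window_seconds: float = 60.0, max_calls: int = 100) -> None:
        self.window = window_seconds
        self.max_calls = max_calls
        self.calls = defaultdict(deque)  # agent_id -> recent call timestamps

    def record(self, agent_id: str, timestamp: float) -> bool:
        """Record one call; return True if the agent is now over the limit."""
        recent = self.calls[agent_id]
        recent.append(timestamp)
        # Drop timestamps that have aged out of the window.
        while recent and recent[0] <= timestamp - self.window:
            recent.popleft()
        return len(recent) > self.max_calls
```

The same structure can be keyed by payment method, device fingerprint, or source network instead of agent ID, which is one way to surface clusters of free trial accounts that individually stay under the limit.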

Key performance indicators (KPIs) help measure the effectiveness of monitoring systems. Metrics like time to detect and time to respond show how quickly security teams contain incidents, while tracking attempted data leaks provides a measure of ongoing risk exposure. Audit trails enhance resilience by recording exactly who or what accessed sensitive data, supporting both forensic investigations and compliance obligations. Where feasible, organizations can implement automated response mechanisms that quarantine suspicious agents or cut off anomalous traffic in real time.
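Time to detect and time to respond are straightforward to compute once incidents are recorded with consistent timestamps. The sketch below averages them across incidents. The `Incident` fields are assumed names, and measuring "respond" from detection to resolution is one convention among several.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class Incident:
    started: float   # when the malicious activity began (unix seconds)
    detected: float  # when monitoring raised the alert
    resolved: float  # when the incident was contained


def mean_time_to_detect(incidents: List[Incident]) -> float:
    """Average seconds between the start of an incident and its detection."""
    return mean(i.detected - i.started for i in incidents)


def mean_time_to_respond(incidents: List[Incident]) -> float:
    """Average seconds between detection and containment."""
    return mean(i.resolved - i.detected for i in incidents)
```

For two incidents detected after 60 and 120 seconds and contained 300 and 600 seconds later, these functions return 90 and 450 seconds respectively. Both raise `statistics.StatisticsError` on an empty list, so callers should handle periods with no incidents.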

Resilience through responsibility

The emergence of AI-driven big data systems embodies a fundamental truth: with great power comes great responsibility. Organizations wielding these capabilities are data stewards that have ethical and legal duties regarding how they use and protect data. They remain liable for any errors, policy breaches, or unauthorized data access caused by these AI agents and their human handlers. Their obligation extends beyond prevention to include recovery mechanisms and adapting security measures as new AI protocols and threats emerge. Maintaining resilience is just as important as shoring up resistance. The path forward demands unwavering vigilance from leadership and users alike.

Ravi Rao is a principal software engineer with more than 14 years of experience delivering enterprise-scale, AI-integrated cloud platforms. His expertise spans architecture, design, deployment, and security, driving modernization across SaaS and PaaS ecosystems. Ravi has led AI-first initiatives, advancing secure development practices, performance engineering, and privacy-aware systems. He is recognized for his technical leadership and innovation in building resilient, scalable, and future-ready platforms. Connect with Ravi on LinkedIn.
