Yann LeCun’s Keynote on Predictive Learning, Mixed Reality as Imagined by Magic Leap, and Marc Andreessen’s Thoughts on 5.5 Million Lost Jobs

- Last Updated: December 2, 2024

Yitaek Hwang

Yann LeCun NIPS 2016 Keynote: Predictive Learning

The Thirtieth Annual Conference on Neural Information Processing Systems (NIPS) 2016 concluded on Saturday (Dec 10), showcasing new advancements in machine learning and computational neuroscience. For excellent reviews of each day of the conference, check out Karl N.’s piece on LAB41.

The focus of this week’s post is the keynote speech by Yann LeCun, the Director of AI Research at Facebook, on predictive learning. Are Generative Adversarial Networks (GANs) really the most important innovation in machine learning right now or are we approaching unsupervised learning the wrong way?

Summary:

- The keynote begins with a high-level overview of machine learning, focusing on ConvNets and deep learning in general. LeCun notes that current AI systems are limited because they can’t understand the real world: they lack common sense and the ability to make predictions grounded in an understanding of the natural world.

- LeCun acknowledges that while supervised ConvNets have made significant progress, we need memory-augmented networks to give machines the ability to reason. He also points to a shortcoming of reinforcement learning: sparse rewards provide only a weak learning signal. He concludes that reinforcement learning is effectively useful only in game engines, not in real life.

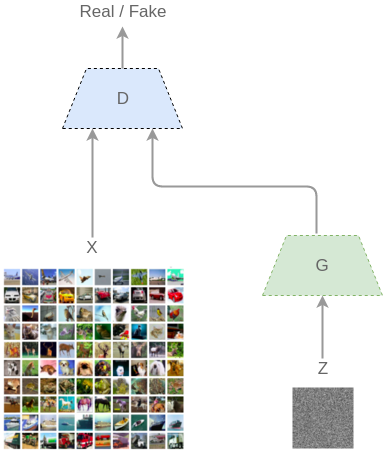

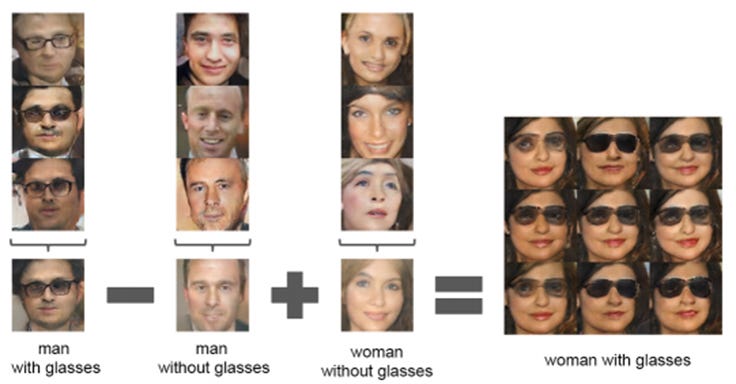

- He then points to adversarial training, specifically the GANs introduced by Ian Goodfellow in 2014, as a way to help machines predict the world better. A GAN consists of a generator and a discriminator that are trained simultaneously. The generator starts from random noise and produces outputs, while the discriminator learns to tell whether an output comes from the same distribution as the training set. You can read more about Deep GANs and other extensions in Tryolabs’ summary of unsupervised learning advances in 2016.

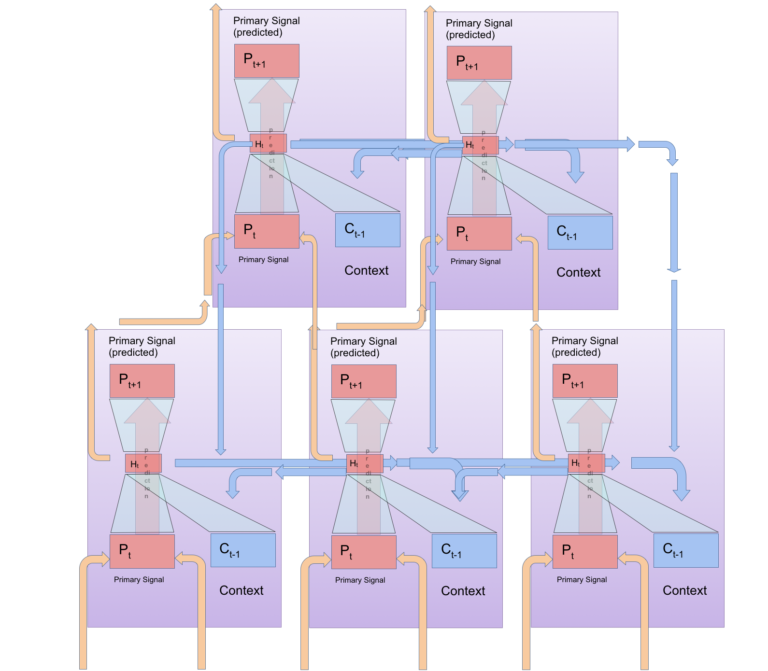
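To make the adversarial objective concrete, here is a small numerical sketch. It relies on a result from Goodfellow et al.’s 2014 paper: for a fixed generator, the best possible discriminator is D*(x) = p_data(x) / (p_data(x) + p_g(x)), and the value of the game at that discriminator bottoms out at -log 4 exactly when the generator’s distribution matches the data. The toy setup is an assumption for illustration only: both distributions are 1D Gaussians, and the expectations are computed by numerical integration rather than by training any networks. The function names are hypothetical.

```python
import math


def gaussian_pdf(x: float, mu: float, sigma: float) -> float:
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def optimal_discriminator(p_data: float, p_gen: float) -> float:
    # Best response of the discriminator to a fixed generator:
    # the probability that a sample at this point came from the real data.
    return p_data / (p_data + p_gen)


def gan_value(mu_data: float, sigma_data: float,
              mu_gen: float, sigma_gen: float,
              lo: float = -20.0, hi: float = 20.0, steps: int = 40_000) -> float:
    """V(D*, G) = E_data[log D*(x)] + E_gen[log(1 - D*(x))], via the midpoint rule."""
    h = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * h
        p = gaussian_pdf(x, mu_data, sigma_data)
        q = gaussian_pdf(x, mu_gen, sigma_gen)
        if p + q == 0.0:
            continue  # both densities underflowed; this point contributes nothing
        if p > 0.0:
            total += p * math.log(optimal_discriminator(p, q)) * h
        if q > 0.0:
            # 1 - D*(x) written as q / (p + q) to avoid log(0) from cancellation.
            total += q * math.log(q / (p + q)) * h
    return total


print(gan_value(0.0, 1.0, 0.0, 1.0), -math.log(4.0))  # generator matches the data
print(gan_value(0.0, 1.0, 3.0, 1.0))                 # generator is off by 3 units
```

A mismatched generator yields a value above -log 4 because the optimal discriminator can tell the two distributions apart; during training, the generator’s job is to push that value back down.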

- LeCun’s ideas are contested by Filip Piekniewski, who argues that current networks don’t capture the dynamics we observe in the world. His proposed Predictive Vision Model (PVM) uses a fully recurrent system that builds up predictions starting at the lowest level. In contrast to LeCun et al., where images are reconstructed from a Laplacian pyramid, the PVM arranges associative memories in a hierarchical, fully recurrent structure to capture multi-scale interactions. You can read the full paper or a summary on his blog.
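For readers unfamiliar with the Laplacian pyramid mentioned above, the sketch below shows the generic decomposition on a 1D signal (images apply the same idea in two dimensions). It is not the model from LeCun et al.; the pair-averaging downsampler and sample-repeating upsampler are simplifying assumptions chosen for brevity, and the function names are hypothetical. Each level stores only the detail lost when the signal is downsampled, so the original can be rebuilt from the coarsest level plus the stored details.

```python
from typing import List


def downsample(signal: List[float]) -> List[float]:
    # Halve the resolution by averaging neighbouring pairs (crude low-pass plus decimation).
    return [(signal[i] + signal[i + 1]) / 2.0 for i in range(0, len(signal), 2)]


def upsample(signal: List[float]) -> List[float]:
    # Double the resolution by repeating each sample (nearest-neighbour interpolation).
    out: List[float] = []
    for value in signal:
        out.extend([value, value])
    return out


def build_laplacian_pyramid(signal: List[float], levels: int) -> List[List[float]]:
    # Returns [detail_0, ..., detail_{levels-1}, coarsest], finest detail first.
    if len(signal) % (2 ** levels) != 0:
        raise ValueError("signal length must be divisible by 2**levels")
    pyramid: List[List[float]] = []
    current = list(signal)
    for _ in range(levels):
        coarse = downsample(current)
        # Detail = what the coarser level cannot represent at this resolution.
        detail = [a - b for a, b in zip(current, upsample(coarse))]
        pyramid.append(detail)
        current = coarse
    pyramid.append(current)
    return pyramid


def reconstruct(pyramid: List[List[float]]) -> List[float]:
    # Start from the coarsest level and add details back from coarse to fine.
    current = pyramid[-1]
    for detail in reversed(pyramid[:-1]):
        current = [a + b for a, b in zip(upsample(current), detail)]
    return current


signal = [1.0, 3.0, 2.0, 8.0, 5.0, 5.0, 0.0, 4.0]
pyramid = build_laplacian_pyramid(signal, levels=3)
print(pyramid[-1])  # [3.5], the mean of the signal
print(reconstruct(pyramid))
```

Because each detail level is defined as the difference between a signal and the upsampled version of its coarser copy, reconstruction is exact (up to floating-point rounding) whichever filters are chosen; practical pipelines typically blur with a Gaussian instead of averaging pairs.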

Takeaway:

As Piekniewski points out, Yann LeCun considers building unsupervised, predictive forward models to be the hill we’re facing right now. And there may be more hills behind it that we can’t see yet in our quest to solve AI-complete problems.

Whether you agree with LeCun or Piekniewski, the current focus is on giving machines some notion of memory to build better predictive systems (note DeepMind’s Differentiable Neural Computers, introduced in LWITF V5.0). Facebook has been actively publishing its findings on different GAN implementations, so we will see whether GANs or PVMs take us to the next level in unsupervised learning.

Bonus: NIPS 2016 Presentation Slides, Video on the same topic given at CMU, Plug & Play Generative Networks

From VR to AR, and now Mixed Reality (MR)

The company that raised an astonishing $793.5 million in what might be the largest C round in history isn’t located in Silicon Valley or New York or even Seattle/Austin/Denver.

This ultra-secretive company, Magic Leap, is based in Fort Lauderdale, Florida, where it is developing a Mixed Reality (MR) system to bring science fiction to life. The VR market is already brimming with big-name players, from low-end devices like Samsung Gear VR and Google Cardboard to high-end headsets like the Oculus Rift and HTC Vive.

But MR differs from VR, and even from AR, in that virtual objects are blended into the real world so that they appear to coexist with physical objects. Magic Leap and its impressive list of backers (Google, Andreessen Horowitz, KPCB) believe that MR will redefine our experience with virtually enhanced systems.

Summary:

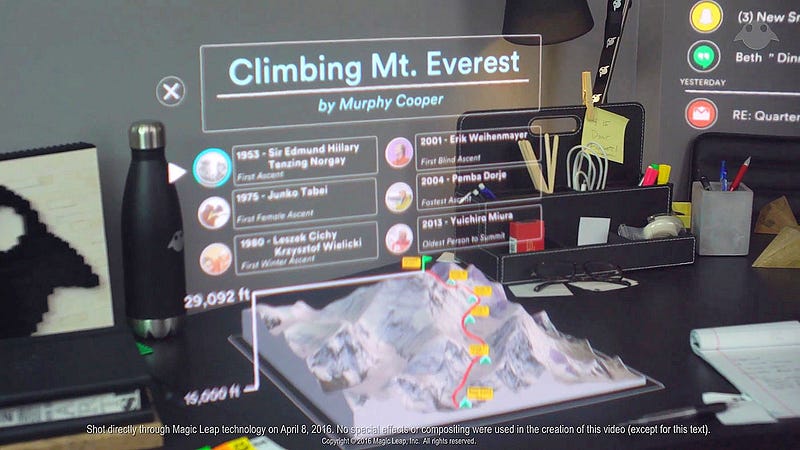

- Magic Leap’s founder, Rony Abovitz, believes that his company is building “the internet of presence and experience.” Current high-end VR systems combine 3D audio, impressive visuals, and even a sense of touch to create a dynamic virtual experience. While Magic Leap has yet to reach that goal, its visuals are beyond what its competitors can offer.

- With a biomedical engineering degree, and having sold his surgical robotics company Mako for $1.65 billion in 2013, Abovitz came to see VR and MR as symbiont technologies: a better experience means hacking both biological circuits and silicon chips to work with the peculiarities of our senses.

- One of the major problems with current VR systems is creating a realistic portrayal of depth. Even when a headset renders an object as far away, the eyes still focus on a screen at a single fixed distance. Likewise, when a virtual object is close, the eyes naturally cross (converge) to look at it, yet their focus stays locked on that same screen. This mismatch between where the eyes point and where they focus, known as the vergence-accommodation conflict, causes visual discomfort. Magic Leap tries to trick the eyes into converging and focusing at consistent, correct distances, relieving that discomfort.

- Magic Leap also projects light directly into the eye instead of displaying an image on a screen, using beam-splitting nano-ridges to reflect the image into the eyes. This reduces pixelation and produces a smoother, more realistic display. While display resolution is constantly improving, Magic Leap’s focus on hacking our natural senses may prove to be an advantage over other MR players like Microsoft’s HoloLens and Meta.
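The depth problem described above can be quantified with two standard quantities: the vergence angle between the eyes’ lines of sight, which grows as an object gets closer, and the accommodation (focus) demand, measured in diopters as 1 / distance in metres. The sketch below assumes a 63 mm interpupillary distance and a hypothetical display whose focal plane sits at a fixed 2 m; neither figure comes from Magic Leap or any specific headset, and the function names are illustrative.

```python
import math


def vergence_angle_deg(ipd_m: float, distance_m: float) -> float:
    # Angle between the two lines of sight when both eyes fixate a point straight ahead.
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def accommodation_diopters(distance_m: float) -> float:
    # Focusing demand in diopters is the reciprocal of the viewing distance in metres.
    return 1.0 / distance_m


def vergence_accommodation_mismatch(object_m: float, focal_plane_m: float) -> float:
    # Dioptric gap between where the eyes converge (the virtual object)
    # and where they must focus (the display's fixed focal plane).
    return abs(accommodation_diopters(object_m) - accommodation_diopters(focal_plane_m))


IPD_M = 0.063         # assumed typical interpupillary distance
FOCAL_PLANE_M = 2.0   # hypothetical fixed focal distance of a headset display

for d in (0.3, 0.5, 2.0, 10.0):
    print(f"object at {d:>4} m: vergence {vergence_angle_deg(IPD_M, d):5.2f} deg, "
          f"mismatch {vergence_accommodation_mismatch(d, FOCAL_PLANE_M):.2f} D")
```

With a fixed focal plane, the mismatch grows as virtual objects come closer (an object at 0.5 m leaves a 1.5-diopter gap), which is the gap a display that adjusts focal depth aims to close.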

Takeaway:

The mass adoption of artificial reality, whether driven by VR, AR, or MR, is still far away. All of these systems require constant technical maintenance, not to mention the cultural stigma, safety concerns, narrow field of view, and incomplete experience left by interface issues. But as Magic Leap and others begin to make virtual objects responsive to physical ones, we can’t help but wonder what the future will hold. There is incredible potential to transform healthcare, education, and entertainment.

You can read the full story at Wired.

Quote of the Week

Q: You mentioned having the answer to this question, which you get a lot of criticism for, particularly on Twitter, about what happens to the 5.5 million jobs [with the introduction of self-driving cars]? What is your answer to that for how the economy gets remade?

A: The first-order answer to the question is the US economy, just this quarter, will destroy over 5 million jobs normally. The US economy is like the duck: it looks calm on the top and is paddling furiously underneath. We will destroy and re-create over 5 million jobs just this quarter, and we will do it again in Q1 of ’17, and we’ll do it again in Q2 of ’17, and we’ll do it again in Q3 of ’17. Five and a half million jobs get reallocated over the next five years — it sounds like a lot, but it’s a drop in the bucket compared to the overall level of change happening in the economy.

The other part of the answer is dynamism. The rate of gross creation and destruction of jobs, economists call that dynamism. Would you believe that the rate of dynamism, which is to say the absolute size of gross creation and destruction of jobs in the US economy, has been rising continuously? Has the rate of change been rising continuously for the last 40 years or falling continuously? What the numbers show is the US economy is becoming less dynamic over time, not more dynamic. The same thing on startups. The rate of startup formation in the US has been declining for 40 years. Everybody thinks it’s been rising; it’s been declining for 40 years.
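Andreessen’s “drop in the bucket” argument is back-of-the-envelope arithmetic, sketched below. The figures come from the interview itself: gross churn of over 5 million jobs per quarter (treated here as a lower bound and assumed steady) and 5.5 million driving jobs at risk over five years. This reproduces only the comparison he describes, not an economic model; the function names are illustrative.

```python
QUARTERLY_CHURN = 5_000_000  # "over 5 million jobs" destroyed and re-created per quarter (lower bound)
DRIVING_JOBS = 5_500_000     # driving jobs at risk, per the interview question
YEARS = 5
QUARTERS_PER_YEAR = 4


def gross_churn(quarterly: int, years: int) -> int:
    # Total jobs destroyed and re-created over the period, assuming a steady quarterly rate.
    return quarterly * QUARTERS_PER_YEAR * years


def share_of_churn(displaced: int, quarterly: int, years: int) -> float:
    # Fraction of the period's gross churn that the displaced jobs would represent.
    return displaced / gross_churn(quarterly, years)


total = gross_churn(QUARTERLY_CHURN, YEARS)
share = share_of_churn(DRIVING_JOBS, QUARTERLY_CHURN, YEARS)
print(f"Gross churn over {YEARS} years: at least {total:,} jobs")
print(f"Driving jobs as a share of that churn: at most {share:.1%}")
```

Even at the quoted lower bound, five years of normal churn comes to 100 million jobs, so the 5.5 million driving jobs would be at most about 5.5% of it.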

Marc Andreessen is a well-known optimist in Silicon Valley, and he credits this optimism to his job as a VC working with hopeful founders. His positivity is a welcome respite from the overwhelming amount of negative press in a year dubbed the “worst year ever in history” by Slate.

Andreessen dismisses the tech-alarmist view of self-driving cars displacing over 5.5 million professional driving jobs in the US over the next five years. Like others working on this technology, he immediately points to the productivity gains that will come from no longer having to drive the car: fewer parking lots, more productive time during the commute, and ripple effects in related industries (delivery, motels, retail, etc.). It’s easy to attribute his optimism and excitement about self-driving cars to his privilege, and rightly so. But there is also some truth to his statements.

First, autonomous vehicles won’t displace people immediately. Autonomous driving in cities presents a huge challenge for computers and, for the most part, will still require some input from a human driver. Andreessen compares this to autopilot on airplanes: we still have pilots despite the technology. In essence, even if self-driving cars are inevitable, the transition will take time. Second, Andreessen points to industries where the rate of innovation has been slow: healthcare, construction, childcare, and senior care. These markets also make up most of GDP, so jobs lost to self-driving cars may be offset by new opportunities in these sectors. Andreessen is at least spurring a conversation about using such technologies as a “force for good” to “counteract the economic divide we create,” as Hannah White suggests in her Medium post on Amazon Go’s effects on retail jobs.

The Rundown

- R vs. Python for data science—Domino Data Lab

- Fighting deceptive UI — Dark Patterns

- Machine Learning for everyday tasks — mailgun

- Understanding Amazon States Language — States-Language.net

- Filter the web by hacker news — HackerNews

- Talks that changed the way I think about programming — Oliver Powell

- Wearable device to help Parkinson’s patients to write again — Microsoft
