Ambient CV Technology in a New Generation of IoT Systems

- Last Updated: August 7, 2025

Synaptics

Computer vision (CV) technology is at an inflection point. Major trends are converging to bring what has been a cloud technology to tiny edge AI devices that are optimized for specific uses and are typically battery-powered.

Technology advancements that address the constraints these devices face (namely size, power, and memory) allow them to perform sophisticated functions locally. These advancements are extending cloud-centric AI technology to the edge, and new developments will make AI vision at the edge pervasive.

Understanding the Technology

CV technology is already at the edge, where it is enabling the next level of human-machine interfaces (HMIs).

Context-aware devices sense not only their users but also the environment in which they operate, all to make better decisions toward more useful automated interactions.

For example, a laptop can visually sense when a user is attentive and adapt its behavior and power policy accordingly. This is useful for power saving (shutting down the device when no user is detected), for security (detecting unauthorized users or unwanted "lurkers"), and for offering a more frictionless user experience. In fact, by tracking onlookers' eyes (onlooker detection), the technology can alert the user and hide the screen content until the coast is clear.
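The laptop behavior described above can be sketched as a small decision function. This is only an illustration: the `FrameObservation` fields, the `ScreenAction` names, and the 30-second lock threshold are hypothetical, and a real product would take these signals from an on-device vision model with its own tuning.

```python
from dataclasses import dataclass
from enum import Enum


class ScreenAction(Enum):
    STAY_ON = "stay_on"
    DIM = "dim"
    LOCK = "lock"
    HIDE_CONTENT = "hide_content"


@dataclass
class FrameObservation:
    """Hypothetical per-frame output of an on-device vision model."""
    user_present: bool     # someone is in front of the screen
    user_attentive: bool   # that user is looking at the screen
    onlooker_count: int    # other people looking at the screen


def decide_action(obs: FrameObservation, seconds_absent: float,
                  lock_after_s: float = 30.0) -> ScreenAction:
    """Map one observation to a power/privacy action (illustrative thresholds)."""
    # Privacy takes priority: hide content while anyone else is watching.
    if obs.onlooker_count > 0:
        return ScreenAction.HIDE_CONTENT
    # No user: dim first, then lock once the absence lasts long enough.
    if not obs.user_present:
        if seconds_absent >= lock_after_s:
            return ScreenAction.LOCK
        return ScreenAction.DIM
    # User present but looking away: save power by dimming.
    if not obs.user_attentive:
        return ScreenAction.DIM
    return ScreenAction.STAY_ON
```

Checking onlookers first reflects one possible design choice, namely that privacy outranks power saving; other orderings are equally plausible.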

Another example: a smart TV senses whether someone is watching and from where, then adapts the image quality and sound accordingly. It can automatically turn off to save power when no one is there. Similarly, an air-conditioning system can optimize power and airflow according to room occupancy to reduce energy costs.

These and other examples of smart energy utilization in buildings are becoming even more financially important with hybrid home-office work models.

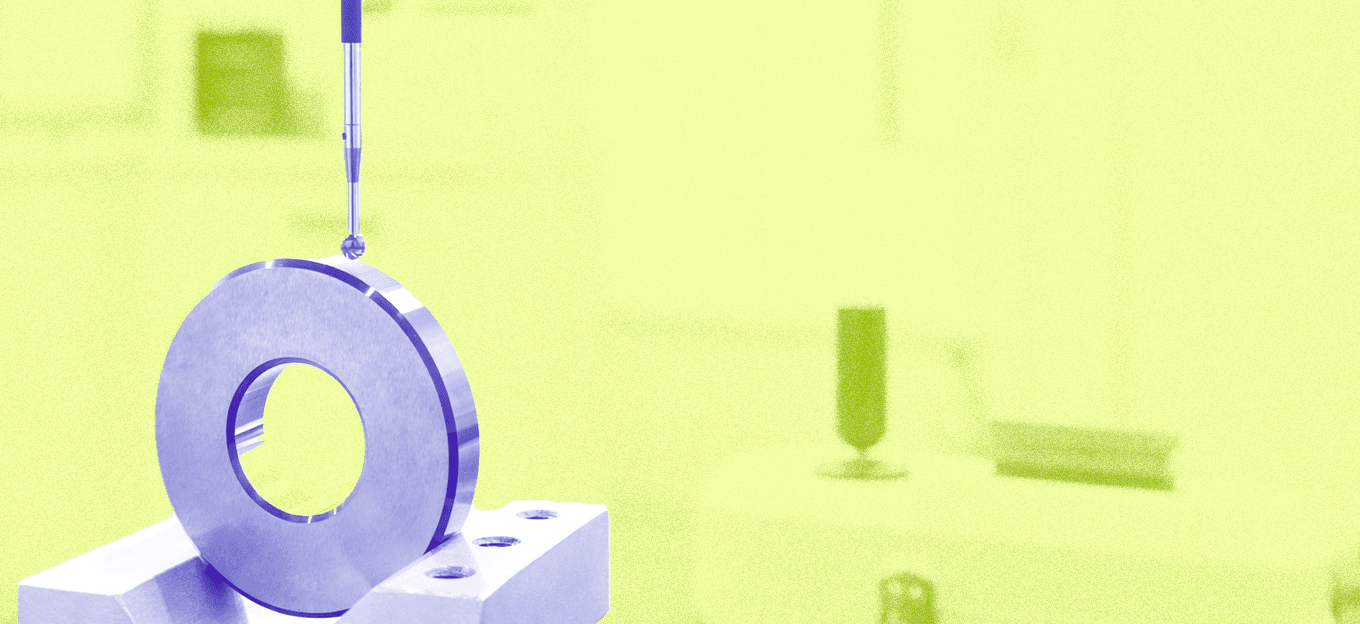

This technology is not limited to TVs and PCs. It also plays a crucial role in manufacturing and other industrial uses, for tasks such as object detection for safety regulation (e.g., restricted zones, safe passages, protective gear enforcement), predictive maintenance, and manufacturing process control. Agriculture is another sector that will benefit greatly from vision-based contextual awareness, for example in crop inspection and quality monitoring.

Applications of Computer Vision

Advancements in deep learning have greatly expanded what computer vision can do. Many people are not even aware that they use CV technology in their everyday lives. For example:

- Image Classification and Object Detection: Object detection combines classification and localization to determine which objects are in an image or video and where they are. It classifies each distinct object and marks its location with a bounding box. This capability runs on mobile phones, where it is used to identify objects in images and video.

- Banking: CV is utilized in areas like fraud control, authentication, data extraction, and more to enhance customer experience, improve security, and increase operational efficiency.

- Retail: Computer vision systems that process image and video data make the digital transformation of the retail industry much more attainable, e.g., through self-checkout.

- Self-Driving Cars: Computer vision is used to detect and classify objects (e.g., road signs or traffic lights), create 3D maps, and estimate motion, and it plays a key role in making autonomous vehicles a reality.
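To make the object detection item above more concrete, the following minimal sketch shows how a detector's output is commonly represented: a class label, a confidence score, and a bounding box. The `Detection` type, the 0.5 score threshold, and the use of intersection-over-union (IoU) to compare boxes are assumptions chosen for illustration and are not taken from any specific product or library.

```python
from dataclasses import dataclass
from typing import List, Tuple

# (x_min, y_min, x_max, y_max) in pixels; an assumed convention.
Box = Tuple[float, float, float, float]


@dataclass(frozen=True)
class Detection:
    label: str    # classification: what the object is
    score: float  # model confidence in [0, 1]
    box: Box      # localization: where the object is


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def keep_confident(dets: List[Detection], min_score: float = 0.5) -> List[Detection]:
    """Drop low-confidence detections (threshold is illustrative)."""
    return [d for d in dets if d.score >= min_score]
```

IoU is a common way to judge how well a predicted box overlaps a reference box; two identical boxes score 1.0 and two disjoint boxes score 0.0.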

CV at the Edge

The trend toward ubiquitous ML-based vision processing at the edge is clear. Hardware costs are decreasing, computation capability is increasing significantly, and new methodologies make it easier to train and deploy smaller-scale models that require less power and memory. All of this is lowering barriers to adoption and increasing the use of CV and AI technology at the edge.

But even as tiny-edge AI becomes increasingly ubiquitous, there is still work to do. To make ambient computing a reality, we need to serve the long tail of use cases in many segments, which can create a scalability challenge.

In consumer products, factories, agriculture, retail, and other segments, each new task requires different algorithms and unique data sets for training. Solution providers are offering more development tools and resources to create optimized ML-enabled systems that meet specific use case requirements.

TinyML

A key enabler for implementing all types of AI at the edge is TinyML, an approach to developing lightweight, power-efficient ML models that run directly on edge devices by using compact model architectures and optimized algorithms.

TinyML enables AI processing to occur locally on the device, reducing the need for constant cloud connectivity. In addition to consuming less power, TinyML implementations deliver reduced latency, enhanced privacy and security, and lower bandwidth requirements.
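One widely used way to fit models into the memory of such devices is weight quantization. The article does not name a specific technique, so the sketch below is an assumption for illustration only: it applies simple symmetric 8-bit quantization to a list of float weights and compares storage size, using hypothetical helper names.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from their int8 form."""
    return [q * scale for q in quantized]


def model_size_bytes(num_params: int, bytes_per_param: int) -> int:
    """Storage needed for the weights alone (ignores any metadata)."""
    return num_params * bytes_per_param


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("quantized:", q, "worst-case error:", worst)
    # float32 uses 4 bytes per weight, int8 uses 1 byte per weight.
    print(model_size_bytes(250_000, 4), "->", model_size_bytes(250_000, 1))
```

Storing each weight in one byte instead of four cuts weight storage by a factor of four, at the cost of a rounding error of at most half the scale per weight. Production toolchains typically add calibration and per-channel scales, which this sketch omits.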

Moreover, it empowers edge devices to make real-time decisions without relying heavily on cloud infrastructure, making AI more accessible and practical in applications such as smart devices, wearables, and industrial automation. This helps address feature gaps and enables AI companies to up-level the software around their NPU offerings by developing rich sets of model examples ("model zoos") and application reference code.

In doing so, they can enable a wider range of applications for the long tail while ensuring design success by having the right algorithms optimized to the target hardware to solve specific business needs, within the defined cost, size, and power constraints.
