A New Era of Automation and Efficiency in Manufacturing

- Last Updated: December 2, 2024

Tim Vehling

The original Industrial Revolution of the 18th and 19th centuries completely reshaped society, changing how goods were manufactured, how people worked, and how people lived. The Second Industrial Revolution, which kicked off in the late 19th century, brought even more advancements thanks to the rollout of electricity and railroad networks. What came to define the modern age, however, was the digital revolution, or Third Industrial Revolution, which began in the latter part of the 20th century. This was the beginning of the Information Age, in which computers became pervasive.

Fourth or Fifth Industrial Revolution

There’s some debate about whether we’re in the Fourth Industrial Revolution or whether society has already progressed to the Fifth. Either way, the current stage is defined by vast amounts of computing power and the possibilities opened up by technologies like artificial intelligence (AI), machine learning (ML), and the Internet of Things (IoT). It’s easy to take these advancements for granted now that they are integrated into so many facets of our lives. In the average American home, you’ll likely find AI-powered devices such as a smart TV, video doorbell, and smart speaker, not to mention the ever-present smartphone. Beyond the smart home, AI and other technologies are driving digital transformation across almost every industry.

Manufacturing

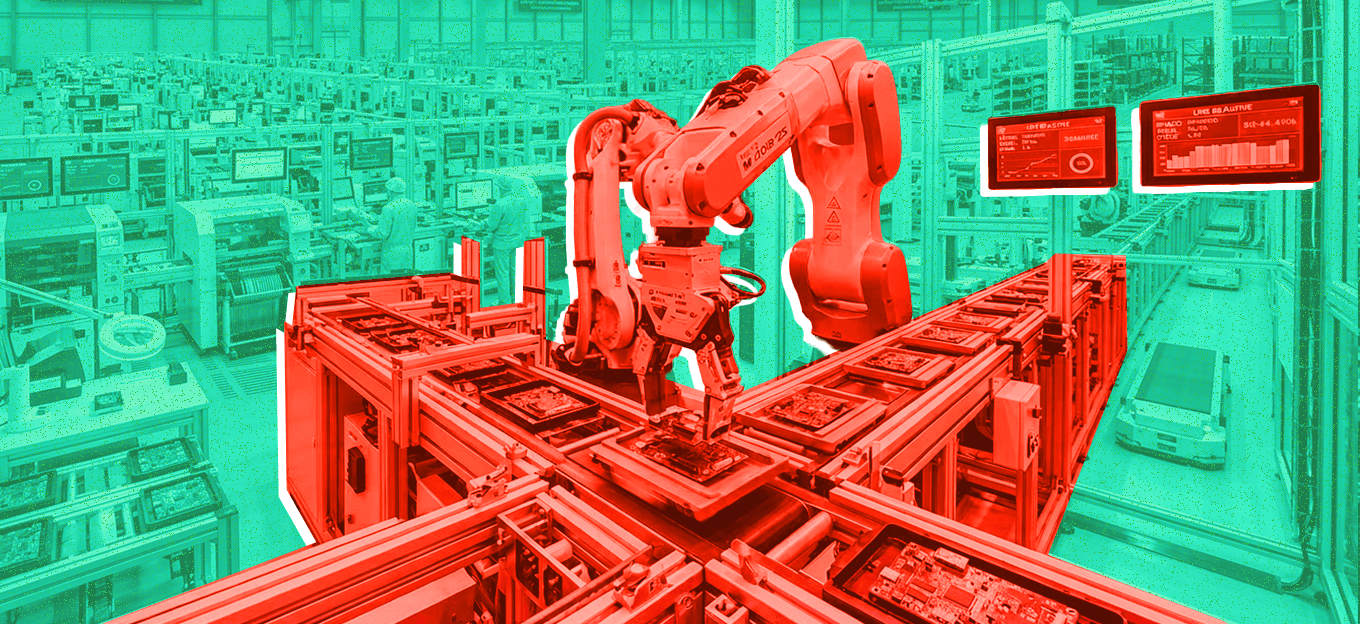

The manufacturing industry is a prime example of how technological advancements like AI and computer vision (CV) are shaking things up in the commercial sector. Many factories, which have already become highly automated over the past few decades, have embraced CV applications to increase efficiency further and drive down costs. CV makes it possible for a machine to inspect hundreds or thousands of parts per minute with much greater reliability than human inspectors. Machines can also measure products with extreme precision to ensure that they meet specified parameters, such as height, length, and width.
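To make the dimensional check concrete, here is a minimal sketch of the pass/fail step that would follow the vision system's measurement. It assumes the CV pipeline has already produced height, length, and width readings in millimeters; the `Tolerance` class, the `inspect_part` function, and every number in `SPEC` are hypothetical and chosen only for illustration, not taken from any particular inspection product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    """A nominal dimension and its allowed deviation, both in millimeters."""
    nominal_mm: float
    plus_minus_mm: float

    def accepts(self, measured_mm: float) -> bool:
        # A reading passes when it lies within nominal +/- the allowed deviation.
        return abs(measured_mm - self.nominal_mm) <= self.plus_minus_mm


# Hypothetical part specification; the values are illustrative assumptions.
SPEC = {
    "height": Tolerance(nominal_mm=20.0, plus_minus_mm=0.5),
    "length": Tolerance(nominal_mm=100.0, plus_minus_mm=1.0),
    "width": Tolerance(nominal_mm=50.0, plus_minus_mm=0.5),
}


def inspect_part(measured: dict[str, float],
                 spec: dict[str, Tolerance] = SPEC) -> list[str]:
    """Return the names of dimensions that are missing or out of tolerance."""
    failures = []
    for name, tolerance in spec.items():
        value = measured.get(name)
        if value is None or not tolerance.accepts(value):
            failures.append(name)
    return failures


if __name__ == "__main__":
    good = {"height": 20.2, "length": 100.4, "width": 49.8}
    bad = {"height": 21.0, "length": 100.0, "width": 50.0}
    print(inspect_part(good))  # no failed dimensions
    print(inspect_part(bad))   # height is 1.0 mm off, beyond the 0.5 mm allowance
```

An empty list means the part passes. Returning the names of the failed dimensions, rather than a bare true/false, lets a production line log why a part was rejected.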

AI

It is fascinating to see how many AI-powered robots now work side by side with humans. This type of collaborative robot, or cobot, combines the skills and intelligence of machines and humans to offer the best of both worlds. Cobots can increase productivity and help keep people safe by taking on more strenuous tasks, freeing workers to focus on more engaging work instead of monotonous, repetitive tasks.

Advancements in edge-AI processing have paved the way for today’s AI robots and will open up new possibilities for robots in the future. Intelligent robots have to process large volumes of information in real time, so it’s much more efficient for these machines to process data at the edge instead of sending it to the cloud and back. Traditionally, it has been challenging to develop processors that can meet the compute requirements of robots. CV applications require very high performance and low latency, but it can be equally important to minimize power consumption.
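The latency argument for edge processing comes down to simple arithmetic, sketched below. The function names are hypothetical, and the inference times, network round trip, and frame rate in the usage section are placeholder assumptions rather than measurements from any real system; the only fixed relationship is that a camera running at a given frame rate leaves 1000 / fps milliseconds to handle each frame.

```python
def frame_budget_ms(frames_per_second: float) -> float:
    """Time available to handle one frame, in milliseconds."""
    return 1000.0 / frames_per_second


def end_to_end_ms(inference_ms: float, network_round_trip_ms: float = 0.0) -> float:
    """Total per-frame latency: compute time plus any trip to the cloud and back."""
    return inference_ms + network_round_trip_ms


def meets_deadline(latency_ms: float, frames_per_second: float) -> bool:
    """True when a frame is fully processed before the next one arrives."""
    return latency_ms <= frame_budget_ms(frames_per_second)


if __name__ == "__main__":
    fps = 30.0  # assumed camera frame rate

    # Placeholder figures, chosen only to illustrate the comparison.
    edge = end_to_end_ms(inference_ms=15.0)
    cloud = end_to_end_ms(inference_ms=5.0, network_round_trip_ms=60.0)

    print(f"budget per frame: {frame_budget_ms(fps):.1f} ms")
    print(f"edge:  {edge:.1f} ms, meets deadline: {meets_deadline(edge, fps)}")
    print(f"cloud: {cloud:.1f} ms, meets deadline: {meets_deadline(cloud, fps)}")
```

With these assumed numbers, the cloud path misses the per-frame deadline even though its inference step is faster, because the network round trip dominates. Real figures depend on the model, the hardware, and the network.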

Analog computing combined with flash memory is helping to solve the challenges of AI processing at the edge. The combination enables powerful processing while driving down silicon costs by up to 20x and increasing power efficiency by 10x. It will be interesting to see how manufacturers use analog compute solutions to develop even more innovative AI machines and processes that we can’t even imagine today.
