Digital Twin Strategy: A C-Level Roadmap to Implementation and Scale

- Last Updated: May 6, 2026

Mehul Rajput

There's a quiet shift happening in boardrooms across industries. CEOs, CTOs, and COOs are no longer asking "What is a digital twin?" They're asking something more pressing: "How do we build one that actually moves the needle?"

That's the right question. And it's a question that deserves a real answer, not a vendor pitch dressed up as strategy.

Digital twins have moved well past the proof-of-concept phase. Organizations that once experimented with virtual replicas of machines or factory floors are now scaling the technology across entire supply chains, enterprise infrastructure, and customer experience systems.

But the gap between organizations doing it well and those spinning their wheels is widening, and that gap is almost always a strategy problem, not a technology problem.

What a Digital Twin Actually Is (And What It Isn't)

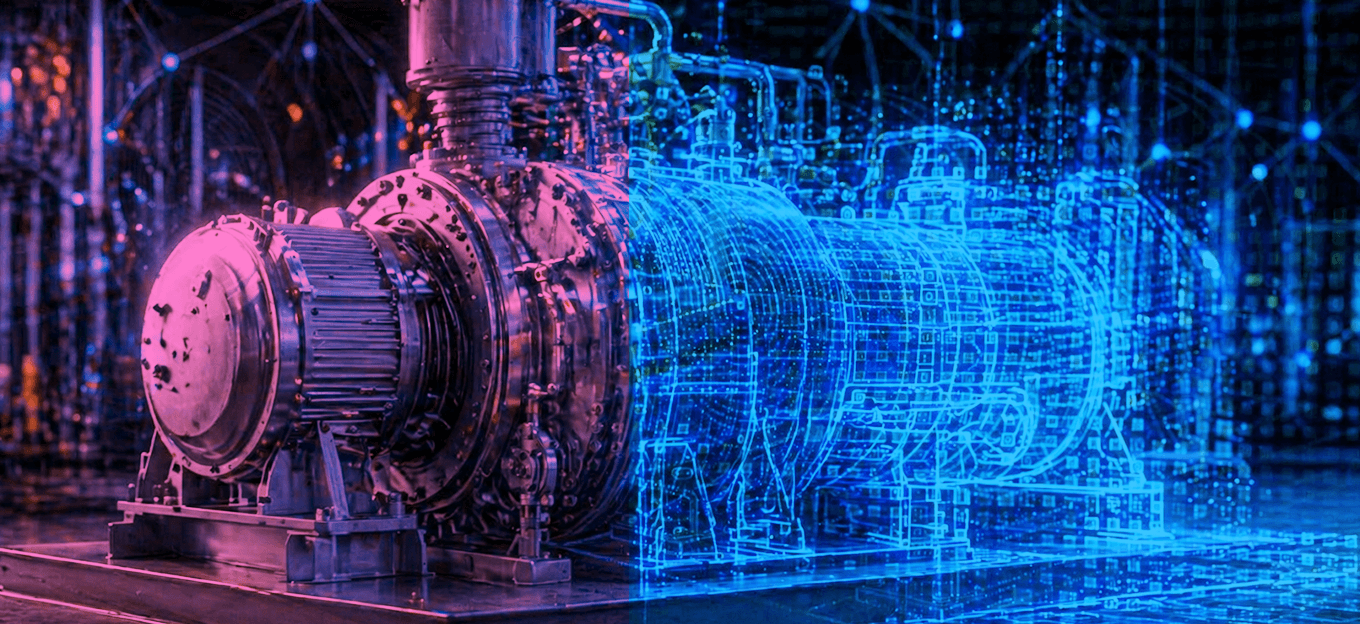

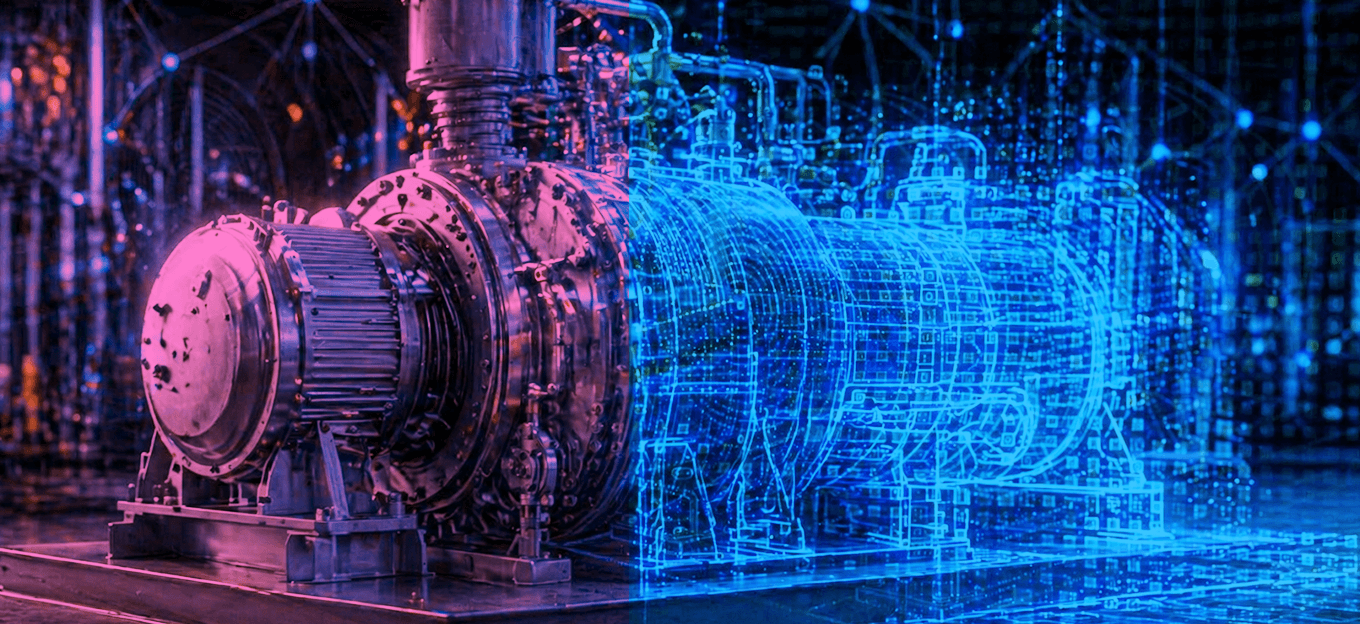

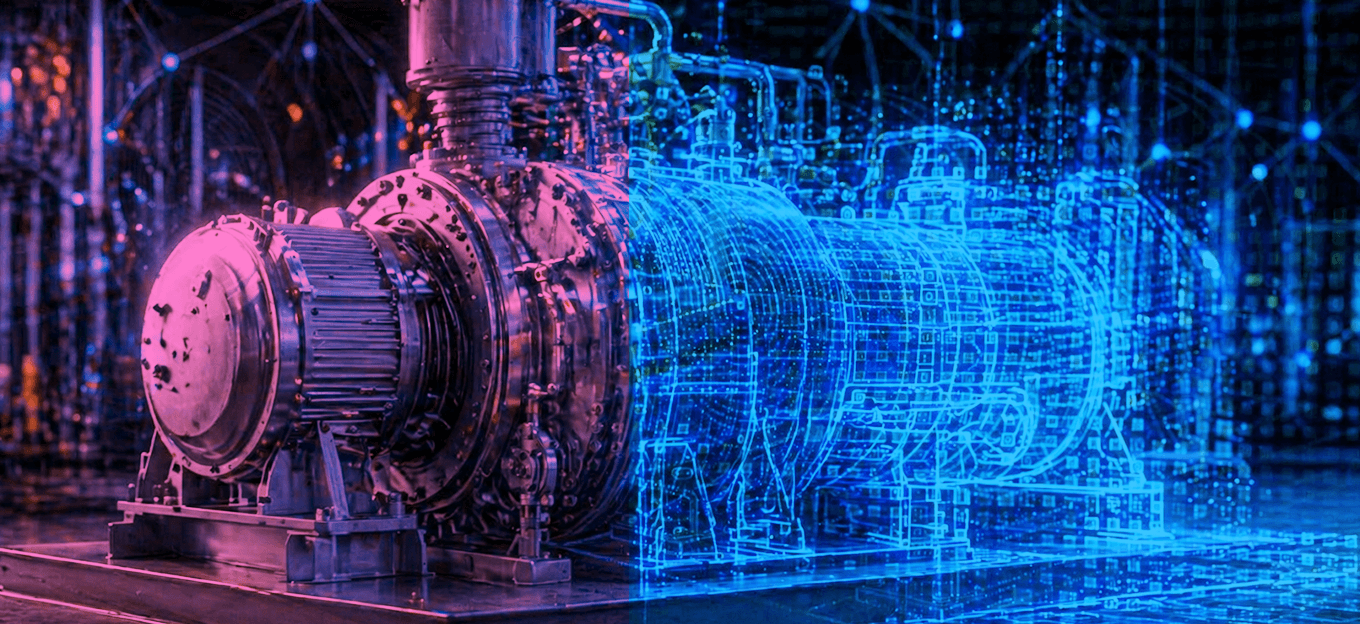

A digital twin is a dynamic, real-time virtual representation of a physical asset, system, process, or environment. The key word is dynamic. A static 3D model or a CAD rendering is not a digital twin.

What makes a twin a twin is the live data connection, the ability to reflect what's actually happening in the physical world, simulate outcomes, and feed insights back into decision-making.

The three core components that make a digital twin functional are a physical entity being mirrored, a digital model of that entity, and a continuous data connection between the two.

Remove any one of those, and you no longer have a digital twin. You have a dashboard or a simulation, both useful, but fundamentally different.
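To make the three components concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than any platform's API: the pump, the `PumpTwin` class, the reading fields, the 60-second freshness window, and the 80 °C limit are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PumpTwin:
    """Digital model of a hypothetical pump (the physical entity)."""

    asset_id: str
    temperature_c: Optional[float] = None
    last_update_s: Optional[float] = None

    def ingest(self, reading: dict) -> None:
        """Data connection: apply one timestamped reading from the asset."""
        self.temperature_c = reading["temperature_c"]
        self.last_update_s = reading["timestamp_s"]

    def is_live(self, now_s: float, max_age_s: float = 60.0) -> bool:
        """True only while the model reflects recent physical data."""
        if self.last_update_s is None:
            return False
        return now_s - self.last_update_s <= max_age_s

    def overheating(self, limit_c: float = 80.0) -> bool:
        """A simple insight derived from the current state (assumed limit)."""
        return self.temperature_c is not None and self.temperature_c > limit_c


twin = PumpTwin("pump-7")
twin.ingest({"temperature_c": 85.0, "timestamp_s": 90.0})
print(twin.is_live(now_s=100.0), twin.overheating())
```

The `is_live` check is the point of the sketch: once readings stop arriving, the same object is just a stale model, which is the dashboard-or-simulation case described above.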

For C-level leaders, this distinction matters. When evaluating vendor proposals or internal initiatives, the question to ask is always: what data is flowing, how often, and what does the system do with it?

Why Digital Twin Strategy Is a C-Level Conversation

Digital twins are sometimes framed as an IT initiative or an engineering project. That framing is one of the most common reasons implementations stall.

The decisions involved in building and scaling a digital twin touch every corner of an organization. Data governance, system integration, workforce readiness, capital allocation, and vendor relationships are all in play.

These are not decisions that sit comfortably within a single department. They require cross-functional authority, which means they require executive ownership.

There's also a competitive dimension that is impossible to ignore. Manufacturers using digital twins are reducing unplanned downtime by significant margins. Healthcare organizations are running clinical simulations before committing to infrastructure investments. Logistics companies are rerouting supply chains in real time based on live twin data. If you're not moving in this direction, someone in your market probably is.

Keeping up with the latest digital twin trends is no longer optional for leadership teams that want to stay ahead of how industries are evolving. The pace of development in this space has accelerated, and what looked like a three-to-five-year horizon in 2021 is happening now.

The Strategic Phases of Digital Twin Implementation

Phase 1: Define the Business Problem, Not the Technology Solution

The most expensive mistake organizations make is starting with the technology and working backward to a use case.

It sounds obvious when stated plainly, but it happens constantly, usually because a vendor demo was impressive or a competitor made news with a digital twin announcement.

An effective digital twin strategy starts with a narrow, painful, well-understood business problem. What is costing you money that you cannot currently explain or predict? Where are your operational blind spots? What decisions would you make differently if you had better real-time visibility?

For a utility company, that problem might be aging grid infrastructure and reactive maintenance costs. For a pharmaceutical manufacturer, it might be batch failure rates that don't have a clear root cause. For a smart building operator, it might be energy inefficiency driven by manual HVAC scheduling.

The business problem shapes everything that follows. It determines which assets to twin first, what data to prioritize, what success looks like, and how to build the business case for further investment.

Phase 2: Assess Your Data Foundation

Digital twins are only as intelligent as the data feeding them. Before committing to any implementation, leadership needs an honest assessment of the organization's current data infrastructure.

This means understanding what sensor data you're already collecting and at what fidelity, where your operational and enterprise data currently lives, how clean and standardized that data is, and whether your integration architecture can support real-time data flows.

Many organizations discover during this assessment that they have significant data gaps. Older equipment may not be instrumented. Legacy systems may not expose APIs. Data from different business units may be formatted inconsistently. These are not dealbreakers, but they are scope considerations that need to go into the implementation plan and the budget.

Rushing past the data assessment phase is one of the clearest predictors of digital twin project failure. A twin that's fed incomplete or unreliable data will generate insights that undermine trust in the system, and once that trust is lost, rebuilding it is harder than starting over.
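One way to put numbers on a data assessment is to score each sensor feed for completeness and gaps. The sketch below is a rough illustration under stated assumptions: the function name `assess_feed`, the sample readings, and the 10-second expected interval are hypothetical, and a real assessment would also look at units, calibration, and cross-system consistency.

```python
from typing import Optional, Sequence


def assess_feed(
    timestamps_s: Sequence[float],
    values: Sequence[Optional[float]],
    expected_interval_s: float,
) -> dict:
    """Score one sensor feed. Assumes timestamps are sorted ascending."""
    if len(timestamps_s) != len(values):
        raise ValueError("timestamps and values must have the same length")
    if len(timestamps_s) < 2 or expected_interval_s <= 0:
        raise ValueError("need at least two readings and a positive interval")

    span_s = timestamps_s[-1] - timestamps_s[0]
    # Readings we would expect if the sensor reported on schedule.
    expected_readings = int(span_s // expected_interval_s) + 1
    # Readings that actually arrived with a usable value.
    valid_readings = sum(1 for v in values if v is not None)
    # Longest silence between two consecutive readings.
    max_gap_s = max(
        later - earlier
        for earlier, later in zip(timestamps_s, timestamps_s[1:])
    )
    return {
        "completeness": min(1.0, valid_readings / expected_readings),
        "max_gap_s": max_gap_s,
    }


# Hypothetical feed: one null value and a 30-second outage.
report = assess_feed([0, 10, 20, 50, 60], [1.0, None, 1.2, 1.1, 1.3], 10)
print(report)
```

In this made-up feed, four usable readings arrive where seven were expected, so completeness is roughly 0.57 with a longest gap of 30 seconds. Numbers like these are what turn "we have data gaps" into a scoped line item in the plan and the budget.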

Phase 3: Choose the Right Implementation Model

Not every organization needs to build a digital twin capability from scratch. The implementation model you choose should align with your existing technical capabilities, your timeline, and the complexity of what you're trying to achieve.

There are generally three paths. The first is a build-led approach, where your internal engineering team owns the architecture and development. This offers maximum control but requires significant technical depth and takes longer to reach value.

The second is a platform-led approach, where you adopt an existing digital twin platform (PTC, Siemens, Microsoft Azure Digital Twins, AWS IoT TwinMaker, and others compete here) and configure it to your use case. This is faster but introduces dependency on a vendor ecosystem and may require meaningful customization.

The third is a partner-led approach, where you engage a specialized digital twin service provider to architect, build, and in some cases manage the twin on your behalf. This is particularly valuable when you need to move quickly, when the internal expertise isn't there yet, or when the use case is complex enough to benefit from domain experience.

Most large-scale implementations end up as some combination of these. The platform provides the infrastructure layer; a partner handles implementation and integration; the internal team owns operations and continuous improvement.

Phase 4: Pilot with Rigor, Not Just Enthusiasm

Piloting is where good intentions meet operational reality. A well-designed pilot does three things. It validates the technical architecture against real-world conditions. It generates early ROI evidence that can fund and justify scale. And it surfaces the organizational change management challenges before they become enterprise-wide problems.

A poorly designed pilot, by contrast, is just expensive experimentation. It has no clear success metrics, a timeline that stretches indefinitely, and stakeholders who lose interest before any results emerge.

For the pilot to be meaningful, leadership should define quantitative success criteria before the pilot starts. What specific metric needs to move, by how much, and over what time period?

If you're piloting a predictive maintenance use case, perhaps the measure is a reduction in unplanned downtime on a specific production line. If it's an energy optimization use case, perhaps it's a measurable reduction in energy cost per square foot in a specific facility.

The pilot should also be large enough to generate statistically meaningful data but small enough to manage. A single facility, a specific product line, or a defined segment of your logistics network is usually the right scope.
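A success criterion of the kind described above can be written down before the pilot starts and checked mechanically afterward. The following is a minimal sketch, assuming a predictive maintenance pilot measured in hours of unplanned downtime. The function name, the 20% target, and the hour figures are hypothetical placeholders, not benchmarks.

```python
def pilot_result(
    baseline_hours: float,
    pilot_hours: float,
    target_reduction: float,
) -> tuple:
    """Return (relative reduction, whether the agreed target was met)."""
    if baseline_hours <= 0:
        raise ValueError("baseline must be positive to compute a reduction")
    reduction = (baseline_hours - pilot_hours) / baseline_hours
    return reduction, reduction >= target_reduction


# Hypothetical figures: 40 h of unplanned downtime in the baseline period,
# 30 h during a pilot of the same length, against an agreed 20% target.
reduction, met = pilot_result(40.0, 30.0, target_reduction=0.20)
print(f"reduction={reduction:.0%}, target met={met}")
```

With these illustrative numbers the reduction is 25%, which clears the 20% target. The value of fixing the target in advance is that the pass or fail call is no longer open to reinterpretation once results come in.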

Phase 5: Build for Scale from Day One

One of the most common regrets expressed by organizations that have been through digital twin implementations is this: they built a pilot that worked beautifully and then discovered that scaling it required rebuilding it almost entirely.

This happens when the pilot is treated as a standalone project rather than as the first module of an enterprise system. The decisions made during the pilot, around data architecture, APIs, integration patterns, and access controls, need to reflect the eventual scaled state, not just the immediate use case.

This doesn't mean over-engineering the pilot. It means making deliberate architectural decisions with scale in mind and documenting the assumptions that would need to change as you grow. If your pilot twins one factory, your architecture should already account for what happens when you twin ten.

The Organizational Challenges Leadership Must Own

Data Governance and Ownership

As digital twins consolidate data from multiple systems, the question of who owns that data, who can access it, and who is accountable for its accuracy quickly becomes politically charged. Establishing data governance policies before implementation begins prevents the turf battles that slow down scaling.

Change Management Across Functions

Operations teams, maintenance crews, and facility managers interact with digital twins very differently from executives reading a dashboard. The user experience layer matters.

If the people closest to the physical assets don't trust the twin's outputs or don't know how to act on them, the technology fails regardless of how sophisticated the back end is.

Vendor and Technology Lock-In

The digital twin platform market is still maturing, and some platforms make it difficult to migrate data or integrate with best-of-breed tools outside their ecosystem.

C-level leaders should ask hard questions about data portability, API openness, and long-term licensing models before signing multi-year platform commitments.

Measuring ROI at Scale

ROI measurement for digital twins is genuinely complex, and anyone who offers you a simple formula is oversimplifying. The value often emerges from multiple compounding effects: reduced downtime, lower maintenance costs, faster product development cycles, energy savings, fewer regulatory compliance events, and better capital allocation decisions.

The most credible ROI frameworks tie twin outputs directly to operational KPIs that already exist in your reporting structure. Don't create new metrics to justify the investment. Instead, show how the twin changes outcomes on metrics leadership already cares about.

As you scale, the ROI story often gets stronger, not weaker, because the marginal cost of adding new assets or processes to an existing twin infrastructure is significantly lower than the initial build. That cost curve is an important part of how to frame the long-term business case.
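The shape of that cost curve is easy to illustrate with simple arithmetic. The sketch below assumes a one-time platform build cost plus a fixed onboarding cost per asset. Both dollar figures are invented for illustration, and real costs rarely stay perfectly flat per asset.

```python
def average_cost_per_asset(
    initial_build_cost: float,
    marginal_cost_per_asset: float,
    asset_count: int,
) -> float:
    """Total spend divided by the number of assets twinned so far."""
    if asset_count < 1:
        raise ValueError("asset_count must be at least 1")
    total = initial_build_cost + marginal_cost_per_asset * asset_count
    return total / asset_count


# Hypothetical figures: 500,000 to build the platform, 20,000 per asset.
for n in (1, 10, 50):
    print(n, average_cost_per_asset(500_000, 20_000, n))
```

Under these assumed figures, the average cost per asset falls from 520,000 for the first asset to 70,000 at ten assets and 30,000 at fifty, because the initial build is spread across a growing base.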

What Separates Leaders from Laggards

Organizations that get the most out of digital twin investments share a few consistent characteristics.

They treat the digital twin as a living system, not a project with a completion date. Continuous refinement of models, ongoing data quality management, and iterative improvement of the analytics layer are built into the operating model from the start.

They invest in the human layer alongside the technology layer. The engineers, data scientists, and operations managers who work with twins daily need ongoing training and feedback loops that allow them to surface model failures and edge cases.

And they think ecosystemically. The most mature digital twin implementations connect twins across organizational boundaries, with suppliers, customers, regulators, and infrastructure providers. That's where the network effects of the technology really begin to show.

Final Thoughts for the C-Suite

Digital twin strategy is not a technology decision. It's a business transformation decision that happens to involve technology.

The organizations that approach it that way, with executive sponsorship, clear business problem framing, rigorous data foundations, and a scaling architecture designed from day one, are the ones generating measurable competitive advantage.

The ones that treat it as an IT project or a pilot-and-forget initiative are burning budget and credibility, and falling further behind.

If you're at the stage of building a business case or evaluating implementation options, the single most valuable thing you can do is start with the problem, not the platform. Everything else flows from that discipline.

The technology has matured enough that the barrier to entry is no longer technical. It's strategic. And that puts the decision exactly where it belongs: in the hands of leadership.
